This post is based on gasida's k8s-deploy study materials.

Two Load Balancers in Kubernetes HA

A highly available Kubernetes cluster uses two kinds of load balancer.

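1.1 Worker Client-Side LB (internal LB used only by workers)

Location

- Inside each worker node (nginx-proxy static pod)

Role

- Used by the kubelet and kube-proxy on each worker node to reach the Control Plane API servers

- Listens on localhost:6443 and distributes connections across the 3 Control Plane nodes

Architecture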

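[Worker Node]
┌─────────────────────────────────┐
│ kubelet                         │
│ kube-proxy                      │
│   ↓                             │
│ localhost:6443                  │
│   ↓                             │
│ nginx-proxy (static pod)        │
│   ├→ CP1:6443                   │
│   ├→ CP2:6443                   │
│   └→ CP3:6443                   │
└─────────────────────────────────┘

Advantages

- Configured automatically (Kubespray installs it)

- No separate LB server required

- Independent on each worker (no single point of failure)

- Minimal network hops

Disadvantages

- Cannot be used by external management tools such as kubectl (it listens only on each worker's 127.0.0.1)

- One nginx instance runs on every worker (extra resource usage)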
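This is a minimal sketch of what nginx-proxy does. It renders an nginx `stream` block equivalent to the /etc/nginx/nginx.conf shown in step 6 of Lab 1. The function name `render_nginx_proxy_conf` is hypothetical, because Kubespray generates the real file from its own template. The directive values (`least_conn`, `proxy_timeout 10m`, `proxy_connect_timeout 1s`) are the ones observed on node4 in this lab.

```shell
#!/bin/sh
# Hypothetical helper: print an nginx "stream" block that listens on
# 127.0.0.1:6443 and load-balances across the given control plane IPs.
# Usage: render_nginx_proxy_conf <cp_ip> [<cp_ip> ...]
render_nginx_proxy_conf() {
  printf 'stream {\n'
  printf '  upstream kube_apiserver {\n'
  printf '    least_conn;\n'
  for cp_ip in "$@"; do
    # No max_fails/fail_timeout given, so nginx defaults apply
    # (max_fails=1, fail_timeout=10s): passive health checking only.
    printf '    server %s:6443;\n' "$cp_ip"
  done
  printf '  }\n'
  printf '  server {\n'
  printf '    listen 127.0.0.1:6443;\n'
  printf '    proxy_pass kube_apiserver;\n'
  printf '    proxy_timeout 10m;\n'
  printf '    proxy_connect_timeout 1s;\n'
  printf '  }\n'
  printf '}\n'
}
```

For example, `render_nginx_proxy_conf 192.168.105.152 192.168.105.153 192.168.105.154` reproduces the lab's upstream list. The listener binds only to 127.0.0.1, so nothing outside the worker can use it. That is why kubectl needs a separate LB.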

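1.2 Admin LB (external LB for administrators)

Location

- A dedicated server (the admin-lb node)

Role

- API access for external users and management tools such as kubectl, CI/CD, and monitoring

- Provides a single endpoint (e.g. admin-lb.example.com:6443)

Architecture

[Client]
┌────────────────────────────────┐
│ kubectl                        │
│ CI/CD pipeline                 │
│ Monitoring system              │
└──────────┬─────────────────────┘
           ↓
admin-lb:6443 (HAProxy/Nginx)
  ├→ CP1:6443
  ├→ CP2:6443
  └→ CP3:6443

Advantages

- A single entry point for management tools

- If Control Plane IPs change, only the LB configuration needs updating

- Access control and logging can be centralized

Disadvantages

- Requires a separate server (infrastructure cost)

- The Admin LB itself is a SPOF (it needs its own HA setup)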
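The Admin LB side can be sketched the same way. This function prints a HAProxy frontend/backend pair shaped like the one in the admin-lb setup script below. The name `render_haproxy_apiserver` and its `name=ip` argument format are hypothetical conventions for this illustration. `mode tcp` passes TLS through, so the API server's own certificate is what clients verify.

```shell
#!/bin/sh
# Hypothetical helper: print a HAProxy frontend/backend that exposes the
# API server on :6443 and round-robins across the given control planes.
# Usage: render_haproxy_apiserver node1=192.168.105.152 [name=ip ...]
render_haproxy_apiserver() {
  printf 'frontend kube-apiserver\n'
  printf '    bind *:6443\n'
  printf '    mode tcp\n'
  printf '    default_backend kube-apiserver-backend\n\n'
  printf 'backend kube-apiserver-backend\n'
  printf '    mode tcp\n'
  printf '    balance roundrobin\n'
  printf '    option tcp-check\n'
  for pair in "$@"; do
    name=${pair%%=*}   # text before the first "="
    ip=${pair#*=}      # text after the first "="
    # "check" turns on HAProxy's active health check for this server
    printf '    server %s %s:6443 check\n' "$name" "$ip"
  done
}
```

Unlike nginx-proxy's passive checks, HAProxy's `check` actively probes each backend. A dead control plane is therefore marked DOWN even when no client traffic is flowing.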

Lab Environment

| Server Name | Role | OS | IP | CPU | MEM | Disk |
|---|---|---|---|---|---|---|
| admin-lb | API LB | Ubuntu 22.04 | 192.168.105.151 | 2 | 16G | 20G |
| node1 | control plane | Ubuntu 22.04 | 192.168.105.152 | 4 | 16G | 30G |
| node2 | control plane | Ubuntu 22.04 | 192.168.105.153 | 4 | 16G | 30G |
| node3 | control plane | Ubuntu 22.04 | 192.168.105.154 | 4 | 16G | 30G |
| node4 | worker | Ubuntu 22.04 | 192.168.105.155 | 4 | 16G | 30G |

Final Goal

kubectl [client] → admin-lb:6443 → HAProxy → control plane [node1, node2, node3]

kubelet [node4] → localhost:6443 → nginx → control plane [node1, node2, node3]

Lab 1. Basic HA with the Worker Client-Side LB

1) Node server setup (node1 to node4)

SSH setup and /etc/hosts name resolution, plus timezone/NTP, swap, kernel modules/sysctl, and base packages

#!/usr/bin/env bash
set -e

echo ">>>> Initial Config Start <<<<"

########################################
# TASK 1. Timezone & NTP
########################################
echo "[TASK 1] Change Timezone and Enable NTP"
timedatectl set-local-rtc 0
timedatectl set-timezone Asia/Seoul
systemctl enable --now systemd-timesyncd >/dev/null 2>&1

########################################
# TASK 2. Disable SWAP (Kubernetes requirement)
########################################
echo "[TASK 2] Disable SWAP"
swapoff -a
sed -i.bak '/\sswap\s/d' /etc/fstab

########################################
# TASK 3. Kernel modules & sysctl
########################################
echo "[TASK 3] Config kernel & module"
cat << EOF > /etc/modules-load.d/k8s.conf
overlay
br_netfilter
EOF
modprobe overlay >/dev/null 2>&1 || true
modprobe br_netfilter >/dev/null 2>&1 || true
cat << EOF > /etc/sysctl.d/k8s.conf
net.bridge.bridge-nf-call-iptables = 1
net.bridge.bridge-nf-call-ip6tables = 1
net.ipv4.ip_forward = 1
EOF
sysctl --system

########################################
# TASK 4. /etc/hosts
########################################
echo "[TASK 4] Setting Local DNS Using hosts file"
sed -i '/admin-lb/d;/node[1-4]/d' /etc/hosts
cat << EOF >> /etc/hosts
192.168.105.151 admin-lb
192.168.105.152 node1
192.168.105.153 node2
192.168.105.154 node3
192.168.105.155 node4
EOF

########################################
# TASK 5. SSH configuration
########################################
echo "[TASK 5] Setting SSHD"
echo "root:<PASSWORD>" | chpasswd
sed -i 's/^#\?PermitRootLogin.*/PermitRootLogin yes/' /etc/ssh/sshd_config
sed -i 's/^#\?PasswordAuthentication.*/PasswordAuthentication yes/' /etc/ssh/sshd_config
systemctl restart ssh >/dev/null 2>&1

########################################
# TASK 6. Base packages
########################################
echo "[TASK 6] Install packages"
apt-get update -qq
apt-get install -y \
  git \
  curl \
  ca-certificates \
  gnupg \
  nfs-common >/dev/null 2>&1

echo ">>>> Initial Config End <<<<"
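After the script runs, you can confirm that the kernel settings from TASK 3 actually landed. The sketch below is a hypothetical post-check named `check_k8s_sysctl`. It parses a sysctl drop-in file (such as /etc/sysctl.d/k8s.conf) and reports any of the three required keys that are missing or not set to 1.

```shell
#!/bin/sh
# Hypothetical post-check: verify that a sysctl drop-in file sets every
# key Kubernetes networking needs to 1. Prints each bad key and returns
# non-zero if any check fails.
check_k8s_sysctl() {
  conf_file=$1
  rc=0
  for key in net.bridge.bridge-nf-call-iptables \
             net.bridge.bridge-nf-call-ip6tables \
             net.ipv4.ip_forward; do
    # Accept "key = 1" or "key=1"; if a key repeats, the last one wins
    val=$(awk -F'=' -v k="$key" '
      { gsub(/[ \t]/, "", $1); gsub(/[ \t]/, "", $2) }
      $1 == k { v = $2 }
      END { print v }' "$conf_file")
    if [ "$val" != "1" ]; then
      echo "NOT OK: $key=${val:-<unset>}"
      rc=1
    fi
  done
  return $rc
}
```

This only checks the file. On a live node, `sysctl -n net.ipv4.ip_forward` shows the value the kernel is actually using. An empty `swapon --show` confirms that TASK 2 took effect.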

2) admin-lb server setup

Install and configure HAProxy and an NFS server, set up SSH (including key distribution) and /etc/hosts entries, clone Kubespray, and install kubectl and Helm

#!/usr/bin/env bash

echo ">>>> Initial Config Start <<<<"

########################################
# TASK 1. Time & NTP
########################################
echo "[TASK 1] Configure timezone & NTP"
timedatectl set-local-rtc 0
timedatectl set-timezone Asia/Seoul
systemctl enable --now systemd-timesyncd >/dev/null 2>&1
echo

########################################
# TASK 2. Disable Firewall (UFW)
########################################
echo "[TASK 2] Disable UFW"
ufw disable >/dev/null 2>&1 || true
echo

########################################
# TASK 3. /etc/hosts
########################################
echo "[TASK 3] Configure /etc/hosts"
sed -i '/admin-lb/d;/node[1-4]/d' /etc/hosts
cat << EOF >> /etc/hosts
192.168.105.151 admin-lb
192.168.105.152 node1
192.168.105.153 node2
192.168.105.154 node3
192.168.105.155 node4
EOF
echo

########################################
# TASK 4. Base Packages
########################################
echo "[TASK 4] Install base packages"
apt-get update -qq
apt-get install -y \
  curl git sshpass ca-certificates \
  apt-transport-https gnupg lsb-release \
  python3-pip nfs-kernel-server >/dev/null 2>&1
echo

########################################
# TASK 5. kubectl
########################################
echo "[TASK 5] Install kubectl"
mkdir -p /etc/apt/keyrings
# --yes lets a rerun overwrite the existing keyring file instead of prompting
curl -fsSL https://pkgs.k8s.io/core:/stable:/v1.32/deb/Release.key \
  | gpg --yes --dearmor -o /etc/apt/keyrings/kubernetes.gpg
echo "deb [signed-by=/etc/apt/keyrings/kubernetes.gpg] https://pkgs.k8s.io/core:/stable:/v1.32/deb/ /" \
  > /etc/apt/sources.list.d/kubernetes.list
apt-get update -qq
apt-get install -y kubectl >/dev/null 2>&1
echo

########################################
# TASK 6. HAProxy (API LB)
########################################
echo "[TASK 6] Install and configure HAProxy"
apt-get install -y haproxy >/dev/null 2>&1
cat << EOF > /etc/haproxy/haproxy.cfg
global
    log /dev/log local0
    daemon
    maxconn 4000

defaults
    mode tcp
    log global
    option tcplog
    timeout connect 10s
    timeout client 1m
    timeout server 1m

frontend kube-apiserver
    bind *:6443
    default_backend kube-apiserver-backend

backend kube-apiserver-backend
    balance roundrobin
    option tcp-check
    server node1 192.168.105.152:6443 check
    server node2 192.168.105.153:6443 check
    server node3 192.168.105.154:6443 check

listen stats
    bind *:9000
    mode http
    stats enable
    stats uri /haproxy_stats
EOF
# The package already starts HAProxy with its default config, so restart
# (not just "enable --now") to load the new haproxy.cfg
systemctl enable haproxy >/dev/null 2>&1
systemctl restart haproxy
echo

########################################
# TASK 7. NFS Server
########################################
echo "[TASK 7] Configure NFS server"
mkdir -p /srv/nfs/share
chown nobody:nogroup /srv/nfs/share
chmod 755 /srv/nfs/share
echo "/srv/nfs/share *(rw,sync,no_root_squash,no_subtree_check)" > /etc/exports
exportfs -rav >/dev/null 2>&1
systemctl enable --now nfs-server >/dev/null 2>&1
echo

########################################
# TASK 8. SSH configuration
########################################
echo "[TASK 8] Configure SSH"
echo "root:<PASSWORD>" | chpasswd
# "#\?" matches the option whether or not it is commented out
sed -i 's/^#\?PermitRootLogin.*/PermitRootLogin yes/' /etc/ssh/sshd_config
sed -i 's/^#\?PasswordAuthentication.*/PasswordAuthentication yes/' /etc/ssh/sshd_config
systemctl restart ssh >/dev/null 2>&1
echo

########################################
# TASK 9. SSH key distribution
########################################
echo "[TASK 9] Generate and distribute SSH key"
# Generate only if missing; otherwise ssh-keygen stops to ask about overwriting
[ -f /root/.ssh/id_rsa ] || ssh-keygen -t rsa -N "" -f /root/.ssh/id_rsa >/dev/null 2>&1
for ip in 151 152 153 154 155; do
  echo " - Copy key to 192.168.105.$ip"
  sshpass -p '<PASSWORD>' ssh-copy-id -o StrictHostKeyChecking=no root@192.168.105.$ip >/dev/null 2>&1
done
echo

########################################
# TASK 10. Kubespray
########################################
echo "[TASK 10] Clone Kubespray and set up the inventory"
git clone -b v2.29.1 https://github.com/kubernetes-sigs/kubespray.git /root/kubespray >/dev/null 2>&1
cp -rfp /root/kubespray/inventory/sample /root/kubespray/inventory/mycluster
cat << EOF > /root/kubespray/inventory/mycluster/inventory.ini
[kube_control_plane]
node1 ansible_host=192.168.105.152 ip=192.168.105.152 etcd_member_name=etcd1
node2 ansible_host=192.168.105.153 ip=192.168.105.153 etcd_member_name=etcd2
node3 ansible_host=192.168.105.154 ip=192.168.105.154 etcd_member_name=etcd3

[etcd:children]
kube_control_plane

[kube_node]
node4 ansible_host=192.168.105.155 ip=192.168.105.155
EOF
echo

########################################
# TASK 11. Python Deps
########################################
echo "[TASK 11] Install Python dependencies"
pip3 install -r /root/kubespray/requirements.txt >/dev/null 2>&1
echo

########################################
# TASK 12. Helm
########################################
echo "[TASK 12] Install Helm"
curl -fsSL https://raw.githubusercontent.com/helm/helm/main/scripts/get-helm-3 | bash >/dev/null 2>&1
echo

echo ">>>> Initial Config End <<<<"

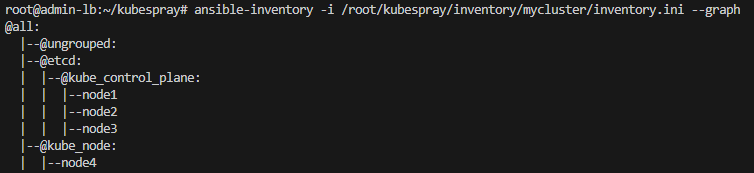

3) Verify the environment

root@admin-lb:~/kubespray-HA# for i in {1..4}; do echo ">> k8s-node$i <<"; ssh node$i hostname; echo; done
>> k8s-node1 <<
node1

>> k8s-node2 <<
node2

>> k8s-node3 <<
node3

>> k8s-node4 <<
node4

root@admin-lb:~/kubespray-HA# for i in {1..5}; do echo ">> k8s-node$i <<"; ssh 192.168.105.15$i hostname; echo; done
>> k8s-node1 <<
admin-lb

>> k8s-node2 <<
node1

>> k8s-node3 <<
node2

>> k8s-node4 <<
node3

>> k8s-node5 <<
node4

root@admin-lb:~/kubespray-HA# which python3 && python3 -V
/usr/bin/python3
Python 3.10.12

root@admin-lb:~/kubespray-HA# cat /root/kubespray/inventory/mycluster/inventory.ini
[kube_control_plane]
node1 ansible_host=192.168.105.152 ip=192.168.105.152 etcd_member_name=etcd1
node2 ansible_host=192.168.105.153 ip=192.168.105.153 etcd_member_name=etcd2
node3 ansible_host=192.168.105.154 ip=192.168.105.154 etcd_member_name=etcd3

[etcd:children]
kube_control_plane

[kube_node]
node4 ansible_host=192.168.105.155 ip=192.168.105.155

root@admin-lb:~/kubespray-HA# exportfs -rav
exporting *:/srv/nfs/share

root@admin-lb:~/kubespray-HA# cat /etc/exports
/srv/nfs/share *(rw,sync,no_root_squash,no_subtree_check)

4) Change the cluster, flannel, and addons settings

# k8s-cluster.yml settings
sed -i 's|kube_owner: kube|kube_owner: root|g' inventory/mycluster/group_vars/k8s_cluster/k8s-cluster.yml
sed -i 's|kube_network_plugin: calico|kube_network_plugin: flannel|g' inventory/mycluster/group_vars/k8s_cluster/k8s-cluster.yml
sed -i 's|kube_proxy_mode: ipvs|kube_proxy_mode: iptables|g' inventory/mycluster/group_vars/k8s_cluster/k8s-cluster.yml
sed -i 's|enable_nodelocaldns: true|enable_nodelocaldns: false|g' inventory/mycluster/group_vars/k8s_cluster/k8s-cluster.yml
echo "enable_dns_autoscaler: false" >> inventory/mycluster/group_vars/k8s_cluster/k8s-cluster.yml

root@admin-lb:~/kubespray# grep -iE 'kube_owner|kube_network_plugin:|kube_proxy_mode|enable_nodelocaldns:' inventory/mycluster/group_vars/k8s_cluster/k8s-cluster.yml
kube_owner: root
kube_network_plugin: flannel
kube_proxy_mode: iptables
enable_nodelocaldns: false

# flannel settings
echo "flannel_interface: ens33" >> inventory/mycluster/group_vars/k8s_cluster/k8s-net-flannel.yml

root@admin-lb:~/kubespray# grep "^[^#]" inventory/mycluster/group_vars/k8s_cluster/k8s-net-flannel.yml
flannel_interface: ens33

# addons
sed -i 's|metrics_server_enabled: false|metrics_server_enabled: true|g' inventory/mycluster/group_vars/k8s_cluster/addons.yml
echo "metrics_server_requests_cpu: 25m" >> inventory/mycluster/group_vars/k8s_cluster/addons.yml
echo "metrics_server_requests_memory: 16Mi" >> inventory/mycluster/group_vars/k8s_cluster/addons.yml

root@admin-lb:~/kubespray# grep -iE 'metrics_server_enabled:' inventory/mycluster/group_vars/k8s_cluster/addons.yml
metrics_server_enabled: true
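The `echo ... >>` lines above append a new line every time they run, so rerunning the step leaves duplicate keys in the YAML. Below is a minimal idempotent alternative, assuming flat top-level `key: value` lines as in these group_vars files. The helper name `set_yaml_kv` is hypothetical. It is not part of Kubespray.

```shell
#!/bin/sh
# Hypothetical helper: set a top-level "key: value" line in a YAML file.
# If an uncommented "key:" line exists, the first one is rewritten and any
# later duplicates are dropped; otherwise the line is appended.
# Note: the key is used in a grep regex, so keep to [A-Za-z0-9_] names.
set_yaml_kv() {
  file=$1; key=$2; value=$3
  if grep -q "^${key}:" "$file"; then
    awk -v k="$key" -v v="$value" '
      index($0, k ":") == 1 { if (!seen) print k ": " v; seen = 1; next }
      { print }' "$file" > "$file.tmp" && mv "$file.tmp" "$file"
  else
    printf '%s: %s\n' "$key" "$value" >> "$file"
  fi
}
```

For example, `set_yaml_kv inventory/mycluster/group_vars/k8s_cluster/k8s-cluster.yml enable_dns_autoscaler false` can be run any number of times and leaves exactly one such line. Commented-out defaults (`# key: ...`) are left untouched.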

5) Install Kubernetes with Kubespray

ANSIBLE_FORCE_COLOR=true ansible-playbook -i inventory/mycluster/inventory.ini -v cluster.yml -e kube_version="1.32.9" | tee kubespray_install.log

root@admin-lb:~/kubespray# kubectl get nodes -o jsonpath='{range .items[*]}{.metadata.name}{"\t"}{.spec.podCIDR}{"\n"}{end}'
node1 10.233.64.0/24
node2 10.233.65.0/24
node3 10.233.67.0/24
node4 10.233.66.0/24

root@admin-lb:~/kubespray# ssh node1 etcdctl.sh member list -w table
+------------------+---------+-------+------------------------------+------------------------------+------------+
| ID | STATUS | NAME | PEER ADDRS | CLIENT ADDRS | IS LEARNER |
+------------------+---------+-------+------------------------------+------------------------------+------------+
| 1d9709e3b87e94d1 | started | etcd2 | https://192.168.105.153:2380 | https://192.168.105.153:2379 | false |
| b65184f1b302bcc4 | started | etcd1 | https://192.168.105.152:2380 | https://192.168.105.152:2379 | false |
| dd3a126bd79df14b | started | etcd3 | https://192.168.105.154:2380 | https://192.168.105.154:2379 | false |
+------------------+---------+-------+------------------------------+------------------------------+------------+
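Three etcd members is what lets the later node1 poweroff test succeed. Raft needs a majority (quorum) of voting members to commit writes. The short sketch below computes quorum and failure tolerance for N members. The function names are illustrative only.

```shell
#!/bin/sh
# Raft majority math for an etcd cluster of N voting members:
#   quorum            = floor(N / 2) + 1
#   failure tolerance = N - quorum
etcd_quorum()    { echo $(( $1 / 2 + 1 )); }
etcd_tolerance() { echo $(( $1 - ($1 / 2 + 1) )); }

for n in 1 3 5; do
  # prints: members=1 quorum=1 tolerates=0 / 3 2 1 / 5 3 2
  printf 'members=%s quorum=%s tolerates=%s failure(s)\n' \
    "$n" "$(etcd_quorum "$n")" "$(etcd_tolerance "$n")"
done
```

With 3 members, losing node1 leaves 2, which is still a quorum. A 4th member would not raise the tolerance above 1, because quorum would become 3. This is why control plane and etcd counts are usually odd.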

root@admin-lb:~/kubespray# for i in {1..3}; do echo ">> node$i <<"; ssh node$i etcdctl.sh endpoint status -w table; echo; done
>> node1 <<
+----------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+
| ENDPOINT | ID | VERSION | DB SIZE | IS LEADER | IS LEARNER | RAFT TERM | RAFT INDEX | RAFT APPLIED INDEX | ERRORS |
+----------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+
| 127.0.0.1:2379 | b65184f1b302bcc4 | 3.5.25 | 11 MB | true | false | 4 | 7746 | 7746 | |
+----------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

>> node2 <<
+----------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+
| ENDPOINT | ID | VERSION | DB SIZE | IS LEADER | IS LEARNER | RAFT TERM | RAFT INDEX | RAFT APPLIED INDEX | ERRORS |
+----------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+
| 127.0.0.1:2379 | 1d9709e3b87e94d1 | 3.5.25 | 12 MB | false | false | 4 | 7746 | 7746 | |
+----------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

>> node3 <<
+----------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+
| ENDPOINT | ID | VERSION | DB SIZE | IS LEADER | IS LEARNER | RAFT TERM | RAFT INDEX | RAFT APPLIED INDEX | ERRORS |
+----------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+
| 127.0.0.1:2379 | dd3a126bd79df14b | 3.5.25 | 12 MB | false | false | 4 | 7752 | 7752 | |
+----------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+
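When this loop is rerun after a failure, it helps to pull out only the leader. The sketch below reads the table format shown above from stdin and prints the endpoint and member ID of the row whose IS LEADER column is `true`. The function name is hypothetical. The column order is taken from this lab's etcd 3.5 output.

```shell
#!/bin/sh
# Hypothetical helper: read "etcdctl endpoint status -w table" output on
# stdin and print "<ENDPOINT> <ID>" for the leader row.
# Assumed column order: | ENDPOINT | ID | VERSION | DB SIZE | IS LEADER | ...
etcd_leader_from_table() {
  awk -F'|' '
    NF > 6 {
      # trim spaces around the first five cells
      for (i = 2; i <= 6; i++) { sub(/^[ \t]+/, "", $i); sub(/[ \t]+$/, "", $i) }
      if ($6 == "true") print $2, $3
    }'
}
```

Every endpoint in this lab is 127.0.0.1:2379, so the member ID is what identifies the leader. For example, `for i in 1 2 3; do ssh node$i etcdctl.sh endpoint status -w table; done | etcd_leader_from_table` prints b65184f1b302bcc4 (etcd1) for the output above.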

6) Verify the automatically configured Worker Client-Side LB

root@admin-lb:~/kubespray# ssh node4 crictl ps | grep nginx
ad5e49b1e4777 c44d76c3213ea 46 minutes ago Running ingress-nginx-controller 0 523e6682ea310 ingress-nginx-controller-gpb84 ingress-nginx
beebaa63167ed c318e336065b1 About an hour ago Running nginx-proxy 0 1bddacee49bf0 nginx-proxy-node4 kube-system

root@admin-lb:~/kubespray# ssh node4 curl -s localhost:8081/healthz -I
HTTP/1.1 200 OK
Server: nginx
Date: Sun, 08 Feb 2026 03:22:10 GMT
Content-Type: text/plain
Content-Length: 0
Connection: keep-alive

root@admin-lb:~/kubespray# ssh node4 cat /etc/kubernetes/kubelet.conf | grep server
server: https://localhost:6443

root@admin-lb:~/kubespray# kubectl get cm -n kube-system kube-proxy -o yaml | grep server
server: https://127.0.0.1:6443

root@admin-lb:~/kubespray# ssh node4 ss -tnlp | grep :6443
LISTEN 0 511 127.0.0.1:6443 0.0.0.0:* users:(("nginx",pid=44082,fd=5),("nginx",pid=44081,fd=5),("nginx",pid=44054,fd=5))

root@admin-lb:~/kubespray# ssh node4 cat /etc/nginx/nginx.conf
...
stream {
  upstream kube_apiserver {
    least_conn;
    server 192.168.105.152:6443;
    server 192.168.105.153:6443;
    server 192.168.105.154:6443;
  }

  server {
    listen 127.0.0.1:6443;
    proxy_pass kube_apiserver;
    proxy_timeout 10m;
    proxy_connect_timeout 1s;
  }
}
...

7) HA behavior test

# Check the worker node's access to the API server
root@admin-lb:~/kubespray# ssh node4 curl -sk https://localhost:6443/version
{
  "major": "1",
  "minor": "32",
  "gitVersion": "v1.32.9",
  "gitCommit": "cea7087b31eb788b75934d769a28f058ab309318",
  "gitTreeState": "clean",
  "buildDate": "2025-09-09T19:37:59Z",
  "goVersion": "go1.23.12",
  "compiler": "gc",
  "platform": "linux/amd64"
}

# Simulate a failure: power off Control Plane node 1
root@admin-lb:~/kubespray# ssh node1 poweroff

# Check the worker node's access to the API server again
root@admin-lb:/home/ria# ssh node4 curl -sk https://localhost:6443/version
{
  "major": "1",
  "minor": "32",
  "gitVersion": "v1.32.9",
  "gitCommit": "cea7087b31eb788b75934d769a28f058ab309318",
  "gitTreeState": "clean",
  "buildDate": "2025-09-09T19:37:59Z",
  "goVersion": "go1.23.12",
  "compiler": "gc",
  "platform": "linux/amd64"
}

# Confirm that node1 is taken out of the upstream pool
root@node2:~# kubectl logs -f nginx-proxy-node4 -n kube-system
...
2026/02/08 03:42:00 [error] 20#20: *1051 recv() failed (104: Connection reset by peer) while proxying and reading from upstream, client: 127.0.0.1, server: 127.0.0.1:6443, upstream: "192.168.105.152:6443", bytes from/to client:1489/0, bytes from/to upstream:0/1489
2026/02/08 03:42:00 [error] 21#21: *1071 recv() failed (104: Connection reset by peer) while proxying and reading from upstream, client: 127.0.0.1, server: 127.0.0.1:6443, upstream: "192.168.105.152:6443", bytes from/to client:1489/0, bytes from/to upstream:0/1489
2026/02/08 03:42:00 [error] 21#21: *1077 recv() failed (104: Connection reset by peer) while proxying and reading from upstream, client: 127.0.0.1, server: 127.0.0.1:6443, upstream: "192.168.105.152:6443", bytes from/to client:1489/0, bytes from/to upstream:0/1489
2026/02/08 03:42:00 [error] 21#21: *1065 recv() failed (104: Connection reset by peer) while proxying and reading from upstream, client: 127.0.0.1, server: 127.0.0.1:6443, upstream: "192.168.105.152:6443", bytes from/to client:1489/0, bytes from/to upstream:0/1489
2026/02/08 03:42:00 [error] 20#20: *1049 recv() failed (104: Connection reset by peer) while proxying and reading from upstream, client: 127.0.0.1, server: 127.0.0.1:6443, upstream: "192.168.105.152:6443", bytes from/to client:1471/0, bytes from/to upstream:0/1471
2026/02/08 03:42:00 [error] 20#20: *1082 recv() failed (104: Connection reset by peer) while proxying and reading from upstream, client: 127.0.0.1, server: 127.0.0.1:6443, upstream: "192.168.105.152:6443", bytes from/to client:1489/0, bytes from/to upstream:0/1489
2026/02/08 03:42:00 [error] 20#20: *1061 recv() failed (104: Connection reset by peer) while proxying and reading from upstream, client: 127.0.0.1, server: 127.0.0.1:6443, upstream: "192.168.105.152:6443", bytes from/to client:1489/0, bytes from/to upstream:0/1489
2026/02/08 03:43:19 [error] 21#21: *1105 upstream timed out (110: Operation timed out) while connecting to upstream, client: 127.0.0.1, server: 127.0.0.1:6443, upstream: "192.168.105.152:6443", bytes from/to client:0/0, bytes from/to upstream:0/0
2026/02/08 03:43:19 [warn] 21#21: *1105 upstream server temporarily disabled while connecting to upstream, client: 127.0.0.1, server: 127.0.0.1:6443, upstream: "192.168.105.152:6443", bytes from/to client:0/0, bytes from/to upstream:0/0
...
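These log lines show nginx's passive health check in action. Open connections to node1 are reset, then a new connect attempt times out after `proxy_connect_timeout 1s`, and nginx marks the upstream "temporarily disabled" (by default for `fail_timeout`, 10s, before retrying it). The sketch below is a hypothetical summarizer, `summarize_upstream_errors`, that counts those three events per upstream from the log on stdin.

```shell
#!/bin/sh
# Hypothetical helper: summarize nginx-proxy error log lines (stdin) per
# upstream: connection resets, connect timeouts, "temporarily disabled".
summarize_upstream_errors() {
  awk '
    match($0, /upstream: "[^"]+"/) {
      # strip the leading `upstream: "` (11 chars) and the trailing quote
      up = substr($0, RSTART + 11, RLENGTH - 12)
      if ($0 ~ /Connection reset by peer/)             reset[up]++
      if ($0 ~ /upstream timed out/)                   timeout[up]++
      if ($0 ~ /upstream server temporarily disabled/) disabled[up]++
      seen[up] = 1
    }
    END {
      for (u in seen)
        printf "%s resets=%d timeouts=%d disabled=%d\n", u, reset[u], timeout[u], disabled[u]
    }'
}
```

For example, `kubectl logs nginx-proxy-node4 -n kube-system | summarize_upstream_errors` should report only 192.168.105.152:6443 for the excerpt above.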

# kubectl fails because its kubeconfig still points directly at node1 (192.168.105.152) => add the Admin LB in Lab 2
root@admin-lb:/home/ria# kubectl get pods -A
Unable to connect to the server: dial tcp 192.168.105.152:6443: connect: no route to host
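The error shows kubectl dialing a single control plane address. To see which endpoint a kubeconfig targets before and after editing it, a tiny sketch is enough. `kubeconfig_servers` is a hypothetical name. It assumes the usual `server: <url>` layout that kubectl writes.

```shell
#!/bin/sh
# Hypothetical helper: print every "server:" URL in a kubeconfig file
# (defaults to ~/.kube/config), i.e. the API endpoints kubectl will dial.
kubeconfig_servers() {
  awk '$1 == "server:" { print $2 }' "${1:-$HOME/.kube/config}"
}
```

With kubectl itself, `kubectl config view --minify -o jsonpath='{.clusters[0].cluster.server}'` gives the same answer for the current context.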

Lab 2. Full HA by adding the Admin LB

1) Point API server calls at the Admin LB (edit the kubeconfig)

root@admin-lb:/home/ria# sed -i 's/192.168.105.152/192.168.105.151/g' ~/.kube/config

root@admin-lb:/home/ria# cat ~/.kube/config | grep server
server: https://192.168.105.151:6443

root@admin-lb:/home/ria# kubectl get node
E0208 12:55:24.571091 2672733 memcache.go:265] "Unhandled Error" err="couldn't get current server API group list: Get \"https://192.168.105.151:6443/api?timeout=32s\": tls: failed to verify certificate: x509: certificate is valid for 10.233.0.1, 192.168.105.154, 192.168.105.152, 127.0.0.1, ::1, 192.168.105.153, not 192.168.105.151"
E0208 12:55:24.574813 2672733 memcache.go:265] "Unhandled Error" err="couldn't get current server API group list: Get \"https://192.168.105.151:6443/api?timeout=32s\": tls: failed to verify certificate: x509: certificate is valid for 10.233.0.1, 192.168.105.153, 192.168.105.152, 127.0.0.1, ::1, 192.168.105.154, not 192.168.105.151"
E0208 12:55:24.578513 2672733 memcache.go:265] "Unhandled Error" err="couldn't get current server API group list: Get \"https://192.168.105.151:6443/api?timeout=32s\": tls: failed to verify certificate: x509: certificate is valid for 10.233.0.1, 192.168.105.154, 192.168.105.152, 127.0.0.1, ::1, 192.168.105.153, not 192.168.105.151"
E0208 12:55:24.582311 2672733 memcache.go:265] "Unhandled Error" err="couldn't get current server API group list: Get \"https://192.168.105.151:6443/api?timeout=32s\": tls: failed to verify certificate: x509: certificate is valid for 10.233.0.1, 192.168.105.153, 192.168.105.152, 127.0.0.1, ::1, 192.168.105.154, not 192.168.105.151"
E0208 12:55:24.586192 2672733 memcache.go:265] "Unhandled Error" err="couldn't get current server API group list: Get \"https://192.168.105.151:6443/api?timeout=32s\": tls: failed to verify certificate: x509: certificate is valid for 10.233.0.1, 192.168.105.154, 192.168.105.152, 127.0.0.1, ::1, 192.168.105.153, not 192.168.105.151"
Unable to connect to the server: tls: failed to verify certificate: x509: certificate is valid for 10.233.0.1, 192.168.105.154, 192.168.105.152, 127.0.0.1, ::1, 192.168.105.153, not 192.168.105.151

- TLS verification error: the Kubernetes API server certificate is valid only for the IPs and DNS names listed in its SAN, and the Admin LB address 192.168.105.151 is not among them. HAProxy runs in TCP mode, so kubectl still sees the API server's own certificate.

2) Add the SANs on the Control Plane servers and reissue the certificate

- Add the new IP and domain name to the supplementary_addresses_in_ssl_keys variable

- Reissue the kube-apiserver certificate

- With the new IP/domain included in the SAN, the TLS error is resolved

root@admin-lb:~/kubespray# echo "supplementary_addresses_in_ssl_keys: [192.168.105.151, k8s-api-srv.admin-lb.com]" >> inventory/mycluster/group_vars/k8s_cluster/k8s-cluster.yml

# Preview the tasks selected by the control-plane tag
root@admin-lb:~/kubespray# ansible-playbook -i inventory/mycluster/inventory.ini -v cluster.yml --tags "control-plane" --list-tasks

root@admin-lb:~/kubespray# ansible-playbook -i inventory/mycluster/inventory.ini -v cluster.yml --tags "control-plane" --limit kube_control_plane -e kube_version="1.32.9"

# After applying

root@admin-lb:~/kubespray# kubectl get node -v=6
I0208 13:25:28.086285 2674444 loader.go:402] Config loaded from file: /root/.kube/config
I0208 13:25:28.086879 2674444 envvar.go:172] "Feature gate default state" feature="InformerResourceVersion" enabled=false
I0208 13:25:28.086899 2674444 envvar.go:172] "Feature gate default state" feature="WatchListClient" enabled=false
I0208 13:25:28.086918 2674444 envvar.go:172] "Feature gate default state" feature="ClientsAllowCBOR" enabled=false
I0208 13:25:28.086926 2674444 envvar.go:172] "Feature gate default state" feature="ClientsPreferCBOR" enabled=false
I0208 13:25:28.098864 2674444 round_trippers.go:560] GET https://192.168.105.151:6443/api/v1/nodes?limit=500 200 OK in 8 milliseconds
NAME STATUS ROLES AGE VERSION
node1 Ready control-plane 128m v1.32.9
node2 Ready control-plane 127m v1.32.9
node3 Ready control-plane 127m v1.32.9
node4 Ready <none> 127m v1.32.9

root@admin-lb:~/kubespray# echo "192.168.105.151 k8s-api-srv.admin-lb.com" >> /etc/hosts
root@admin-lb:~/kubespray# sed -i 's/192.168.105.151/k8s-api-srv.admin-lb.com/g' /root/.kube/config

root@admin-lb:~/kubespray# kubectl get node -v=6
I0208 13:26:05.581396 2674450 loader.go:402] Config loaded from file: /root/.kube/config
I0208 13:26:05.581846 2674450 envvar.go:172] "Feature gate default state" feature="ClientsAllowCBOR" enabled=false
I0208 13:26:05.581869 2674450 envvar.go:172] "Feature gate default state" feature="ClientsPreferCBOR" enabled=false
I0208 13:26:05.581879 2674450 envvar.go:172] "Feature gate default state" feature="InformerResourceVersion" enabled=false
I0208 13:26:05.581885 2674450 envvar.go:172] "Feature gate default state" feature="WatchListClient" enabled=false
I0208 13:26:05.595140 2674450 round_trippers.go:560] GET https://k8s-api-srv.admin-lb.com:6443/api/v1/nodes?limit=500 200 OK in 8 milliseconds
NAME STATUS ROLES AGE VERSION
node1 Ready control-plane 128m v1.32.9
node2 Ready control-plane 128m v1.32.9
node3 Ready control-plane 128m v1.32.9
node4 Ready <none> 127m v1.32.9

root@admin-lb:~/kubespray# ssh node2 cat /etc/kubernetes/ssl/apiserver.crt | openssl x509 -text -noout
...
X509v3 Subject Alternative Name:
DNS:k8s-api-srv.admin-lb.com, DNS:kubernetes, DNS:kubernetes.default, DNS:kubernetes.default.svc, DNS:kubernetes.default.svc.cluster.local, DNS:lb-apiserver.kubernetes.local, DNS:localhost, DNS:node-0.kubernetes.local, DNS:node-1.kubernetes.local, DNS:node1, DNS:node2, DNS:node3, DNS:server.kubernetes.local, IP Address:10.233.0.1, IP Address:192.168.105.153, IP Address:127.0.0.1, IP Address:0:0:0:0:0:0:0:1, IP Address:192.168.105.151, IP Address:192.168.105.152, IP Address:192.168.105.154
...
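Instead of reading the whole SAN line by eye, you can check a specific name with a small filter. The sketch below is a hypothetical `cert_has_san`. It reads `openssl x509 -text -noout` output on stdin and succeeds only if the given IP or DNS name appears in the Subject Alternative Name. It assumes that openssl prints the SAN values on the line right after the `X509v3 Subject Alternative Name:` header, as it normally does.

```shell
#!/bin/sh
# Hypothetical helper: exit 0 if the IP or DNS name given as $1 appears in
# the SAN of the certificate text read from stdin, exit 1 otherwise.
cert_has_san() {
  want=$1
  awk -v want="$want" '
    /X509v3 Subject Alternative Name:/ { getline; san = $0 }
    END {
      n = split(san, parts, ",")
      for (i = 1; i <= n; i++) {
        entry = parts[i]
        sub(/^[ \t]*(DNS|IP Address):/, "", entry)   # drop the type prefix
        if (entry == want) found = 1
      }
      exit (found ? 0 : 1)
    }'
}
```

For example, `ssh node2 cat /etc/kubernetes/ssl/apiserver.crt | openssl x509 -text -noout | cert_has_san 192.168.105.151 && echo present` can be run on each control plane to confirm that all three certificates were reissued.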

3) Update the ConfigMap

- The kubeadm-config ConfigMap is not updated automatically

- Add the SANs to the ConfigMap directly, so that they persist through kubeadm upgrades and when additional Control Plane nodes are installed

root@admin-lb:~/kubespray# kubectl edit cm -n kube-system kubeadm-config
apiVersion: v1
data:
  ClusterConfiguration: |
    apiServer:
      certSANs:
      - kubernetes
      - kubernetes.default
      - kubernetes.default.svc
      - kubernetes.default.svc.cluster.local
      - 10.233.0.1
      - localhost
      - 127.0.0.1
      - ::1
      - node1
      - node2
      - node3
      - lb-apiserver.kubernetes.local
      - 192.168.105.152
      - 192.168.105.153
      - 192.168.105.154
      - server.kubernetes.local
      - node-0.kubernetes.local
      - node-1.kubernetes.local
      - 192.168.105.151
      - k8s-api-srv.admin-lb.com
...

4) HA behavior test

# admin-lb server
root@admin-lb:~/kubespray# while true; do kubectl get node ; echo ; kubectl get pod -n kube-system; sleep 1; echo ; done

# Worker node (node4)
root@node4:/home/ria# while true; do curl -sk https://127.0.0.1:6443/version | grep gitVersion ; date; sleep 1; echo ; done

# Simulate a failure: force power-off node1, one of the three control plane servers
root@node1:~# poweroff

Results

- kubectl: a TLS handshake timeout occurred, and requests resumed after an interruption of about 5 seconds

- curl API requests from the worker: served through nginx-proxy with almost no delay

- Both external user requests and worker node requests failed over to the remaining nodes, and service continued (HA confirmed)
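A plausible reading of the kubectl pause is that HAProxy must fail its active health checks several times before it marks node1 DOWN. HAProxy's defaults are `inter 2s` and `fall 3`, and the config above does not override them. This is an interpretation, not something measured in the lab. During such a test you can watch the Admin LB's view of the backends through the stats page configured on port 9000. HAProxy serves the stats as CSV when `;csv` is appended to the stats URI. The parser below, `haproxy_backend_status`, is hypothetical. It relies on the CSV layout in which field 1 is `pxname`, field 2 is `svname`, and field 18 is `status`.

```shell
#!/bin/sh
# Hypothetical helper: read HAProxy CSV stats on stdin and print
# "<server> <status>" for each server in the backend named by $1.
# CSV fields used: 1 = pxname, 2 = svname, 18 = status.
haproxy_backend_status() {
  backend=$1
  awk -F',' -v b="$backend" '
    $1 == b && $2 != "BACKEND" { print $2, $18 }'
}
```

For example, `curl -s "http://192.168.105.151:9000/haproxy_stats;csv" | haproxy_backend_status kube-apiserver-backend`, run in a loop, should show node1 flip from UP to DOWN shortly after the poweroff. Quote the URL, because `;` is special to the shell.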

# admin-lb server
...
Unable to connect to the server: net/http: TLS handshake timeout
NAME READY STATUS RESTARTS AGE
coredns-664b99d7c7-lgw7x 1/1 Running 0 114m
coredns-664b99d7c7-zxhhl 1/1 Running 0 49m
kube-apiserver-node1 1/1 Running 1 (26m ago) 131m
kube-apiserver-node2 1/1 Running 1 131m
kube-apiserver-node3 1/1 Running 1 131m
kube-controller-manager-node1 1/1 Running 3 (26m ago) 131m
kube-controller-manager-node2 1/1 Running 2 131m
kube-controller-manager-node3 1/1 Running 2 131m
kube-flannel-4jb7p 1/1 Running 0 114m
kube-flannel-6c986 1/1 Running 1 (26m ago) 114m
kube-flannel-6v7sd 1/1 Running 0 114m
kube-flannel-jdfdd 1/1 Running 0 114m
kube-proxy-7fphd 1/1 Running 0 130m
kube-proxy-8qcl5 1/1 Running 0 130m
kube-proxy-j7wzk 1/1 Running 1 (26m ago) 130m
kube-proxy-jkdp4 1/1 Running 0 130m
kube-scheduler-node1 1/1 Running 2 (26m ago) 131m
kube-scheduler-node2 1/1 Running 1 131m
kube-scheduler-node3 1/1 Running 1 131m
metrics-server-65fdf69dcb-7fvnh 1/1 Running 0 113m
nginx-proxy-node4 1/1 Running 0 130m

# Worker node (node4)
...
"gitVersion": "v1.32.9",
Sun Feb 8 01:28:44 PM KST 2026

"gitVersion": "v1.32.9",
Sun Feb 8 01:28:45 PM KST 2026

"gitVersion": "v1.32.9",
Sun Feb 8 01:28:46 PM KST 2026
...
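To measure an interruption like the "about 5 seconds" above instead of estimating it from scrolling output, you can log one Unix timestamp per successful probe and look for the largest gap. The sketch below assumes a probe loop like `while true; do curl -sk --max-time 2 https://127.0.0.1:6443/version >/dev/null && date +%s; sleep 1; done > probe.log`. The helper name `max_probe_gap` is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: read one Unix timestamp per successful probe from
# stdin and print the largest gap (seconds) between consecutive successes.
max_probe_gap() {
  awk 'NR > 1 { gap = $1 - prev; if (gap > max) max = gap }
       { prev = $1 }
       END { print max + 0 }'
}
```

Run `max_probe_gap < probe.log` after the test. With a 1-second sleep, a gap of about 1 means no interruption was noticed, so anything larger approximates the failover time.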

Recommendations for production

- Worker Client-Side LB => keep it enabled

- Admin LB => make it HA (two Admin LB servers + a Keepalived VIP)

# Example Admin LB HA setup
VIP: 192.168.105.148 (Keepalived Virtual IP)
admin-lb-1: 192.168.105.149 (MASTER)
admin-lb-2: 192.168.105.150 (BACKUP)

# kubectl (external user) settings in ~/.kube/config

clusters:
- cluster:
    server: https://192.168.105.148:6443
  name: kubernetes
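For the two-node Admin LB above, this is a minimal sketch of how each node's keepalived.conf could be produced. It assumes the interface is ens33 (as used for flannel_interface in this lab) and that HAProxy health is tracked with `pgrep`. `virtual_router_id 51` and the function name `render_keepalived_conf` are arbitrary, hypothetical choices. Authentication and unicast peers are left out.

```shell
#!/bin/sh
# Hypothetical helper: print a minimal keepalived.conf for one Admin LB node.
# Args: state (MASTER|BACKUP), priority, interface, VIP
render_keepalived_conf() {
  state=$1; priority=$2; iface=$3; vip=$4
  cat <<EOF
vrrp_script chk_haproxy {
    # node is considered unhealthy if no haproxy process is running
    script "/usr/bin/pgrep haproxy"
    interval 2
    fall 2
}

vrrp_instance VI_API {
    state ${state}
    interface ${iface}
    virtual_router_id 51
    priority ${priority}
    advert_int 1
    virtual_ipaddress {
        ${vip}
    }
    track_script {
        chk_haproxy
    }
}
EOF
}
```

For example, admin-lb-1 could use `render_keepalived_conf MASTER 110 ens33 192.168.105.148` and admin-lb-2 could use `render_keepalived_conf BACKUP 100 ens33 192.168.105.148`, with an identical haproxy.cfg on both. Because kubectl now dials 192.168.105.148, that VIP must also be added to supplementary_addresses_in_ssl_keys. Otherwise the same x509 error seen in Lab 2 reappears.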