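This post is based on the official Kubernetes The Hard Way documentation and gasida's study materials.

This lab avoids automated installers such as kubeadm and kind. Instead, every component of a Kubernetes cluster is installed and configured by hand, with the goal of understanding how the cluster works internally.

Working through the steps shows which components make up Kubernetes, what role each one plays and how they communicate, and why settings such as TLS certificates, kubeconfig, and RBAC are needed and where they are used.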

kubernetes-the-hard-way/docs at master · kelseyhightower/kubernetes-the-hard-way · GitHub

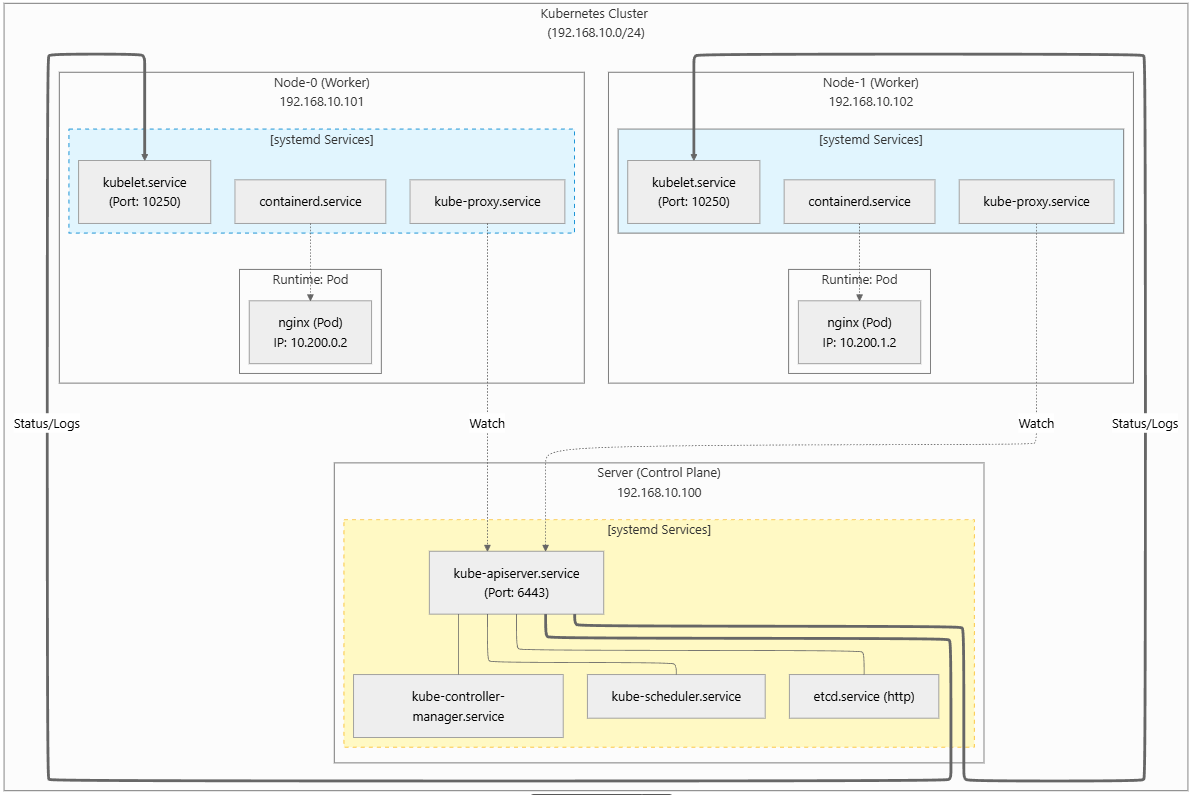

- Final architecture of the 'Kubernetes The Hard Way' lab

1. VM Preparation

| Server Name | OS | IP | CPU | MEM | Disk |
|---|---|---|---|---|---|
| jumpbox | Ubuntu 22.04 | 192.168.105.151 | 2 | 16G | 20G |
| server | Ubuntu 22.04 | 192.168.105.152 | 4 | 16G | 30G |
| node-0 | Ubuntu 22.04 | 192.168.105.153 | 4 | 16G | 30G |
| node-1 | Ubuntu 22.04 | 192.168.105.154 | 4 | 16G | 30G |

2. Jumpbox Setup

## Connect to the jumpbox

# Confirm the root account

whoami

root

# Install tools

sudo apt update

sudo apt install -y tree git jq unzip vim sshpass

sudo wget https://github.com/mikefarah/yq/releases/latest/download/yq_linux_amd64 \

-O /usr/local/bin/yq && sudo chmod +x /usr/local/bin/yq

# Sync GitHub Repository

git clone --depth 1 https://github.com/kelseyhightower/kubernetes-the-hard-way.git

cd kubernetes-the-hard-way

# Download Binaries: fetch the components needed to build the k8s cluster

# The download list differs per CPU architecture

ARCH=$(dpkg --print-architecture)

echo $ARCH
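`dpkg --print-architecture` only exists on Debian-family systems. As a minimal sketch, assuming the repository ships one download list per architecture (as the `downloads-${ARCH}.txt` pattern suggests), the same value could be derived from `uname -m` when `dpkg` is missing. `map_arch` is a hypothetical helper name and is not part of the tutorial repository.

```shell
#!/usr/bin/env bash
# map_arch is a hypothetical helper: translate `uname -m` names into the
# amd64/arm64 names that dpkg would report.
map_arch() {
  case "$1" in
    x86_64|amd64)  echo "amd64" ;;
    aarch64|arm64) echo "arm64" ;;
    *)             echo "unsupported: $1" >&2; return 1 ;;
  esac
}

# Prefer dpkg when it is available, otherwise fall back to uname.
ARCH=$(dpkg --print-architecture 2>/dev/null || map_arch "$(uname -m)")
echo "ARCH=${ARCH}"
```

On the Ubuntu 22.04 VMs used here, the `dpkg` branch is the one that runs, so the fallback only matters when repeating the lab on another distribution.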

wget -q --show-progress \

--https-only \

--timestamping \

-P downloads \

-i downloads-${ARCH}.txt

# Create the directories, then extract the archives

mkdir -p downloads/{client,cni-plugins,controller,worker}

tree -d downloads

downloads

├── client

├── cni-plugins

├── controller

└── worker

# Extract the tar.gz, strip the top-level directory, and place the contents in downloads/worker/

tar -xvf downloads/containerd-2.1.0-beta.0-linux-${ARCH}.tar.gz \

--strip-components 1 \

-C downloads/worker

tar -xvf downloads/crictl-v1.32.0-linux-${ARCH}.tar.gz \

-C downloads/worker/

tar -xvf downloads/cni-plugins-linux-${ARCH}-v1.6.2.tgz \

-C downloads/cni-plugins/

tar -xvf downloads/etcd-v3.6.0-rc.3-linux-${ARCH}.tar.gz \

-C downloads/ \

--strip-components 1 \

etcd-v3.6.0-rc.3-linux-${ARCH}/etcdctl \

etcd-v3.6.0-rc.3-linux-${ARCH}/etcd
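The effect of `--strip-components 1` is easier to see on a throwaway archive. The sketch below builds a stand-in tarball (the `demo-v1.0-linux` name and its files are made up for illustration) and extracts it three ways: unchanged, with the wrapper directory stripped, and with a single member selected as in the etcd command above.

```shell
#!/usr/bin/env bash
set -eu
work=$(mktemp -d)
cd "$work"

# Build a stand-in archive shaped like a release tarball:
# one top-level directory wrapping the actual files.
mkdir -p demo-v1.0-linux/bin
echo "fake binary" > demo-v1.0-linux/bin/tool
echo "fake readme" > demo-v1.0-linux/README
tar -czf demo.tar.gz demo-v1.0-linux

mkdir -p out-plain out-stripped out-one
tar -xf demo.tar.gz -C out-plain                          # keeps demo-v1.0-linux/
tar -xf demo.tar.gz -C out-stripped --strip-components 1  # drops the wrapper directory
tar -xf demo.tar.gz -C out-one --strip-components 1 \
  demo-v1.0-linux/README                                  # extracts only the named member

find out-plain out-stripped out-one -type f
```

Naming members after the archive, as the etcd extraction does, avoids unpacking documentation and other files that are never copied to the servers.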

# Move the files and remove the archives

mv downloads/{etcdctl,kubectl} downloads/client/

mv downloads/{etcd,kube-apiserver,kube-controller-manager,kube-scheduler} downloads/controller/

mv downloads/{kubelet,kube-proxy} downloads/worker/

mv downloads/runc.${ARCH} downloads/worker/runc

rm -rf downloads/*gz

# Make the binaries executable.

chmod +x downloads/{client,cni-plugins,controller,worker}/*

# Change the owner of some files

chown root:root downloads/client/etcdctl

chown root:root downloads/controller/etcd

chown root:root downloads/worker/crictl

# Install kubectl, the Kubernetes client tool

cp downloads/client/kubectl /usr/local/bin/

kubectl version --client

Client Version: v1.32.3

Kustomize Version: v5.5.0

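3. SSH Access Between the VMs

## Connect to the jumpbox

# Machine Database (a file that stores each server's attributes)

# The control plane does not run a kubelet, so it needs no Pod subnet

# IPV4_ADDRESS - IP address

# FQDN - fully qualified domain name

# HOSTNAME - host name

# POD_SUBNET - per-node Pod network range (not needed for the control plane)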

cat <<EOF > machines.txt

192.168.105.152 server.kubernetes.local server

192.168.105.153 node-0.kubernetes.local node-0 10.200.0.0/24

192.168.105.154 node-1.kubernetes.local node-1 10.200.1.0/24

EOF

cat machines.txt
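Every later loop in this lab reads `machines.txt` with `while read IP FQDN HOST SUBNET`. The following sketch, which writes the same three records to a temporary file, shows how the fields are split and that the `server` line simply leaves `SUBNET` empty. The `pod_subnet=none` label is only an illustration.

```shell
#!/usr/bin/env bash
set -eu
tmp=$(mktemp)
cat > "$tmp" <<'EOF'
192.168.105.152 server.kubernetes.local server
192.168.105.153 node-0.kubernetes.local node-0 10.200.0.0/24
192.168.105.154 node-1.kubernetes.local node-1 10.200.1.0/24
EOF

# read splits each line on whitespace; a missing fourth field leaves SUBNET empty.
summary=$(while read -r IP FQDN HOST SUBNET; do
  echo "${HOST}: ip=${IP} fqdn=${FQDN} pod_subnet=${SUBNET:-none}"
done < "$tmp")

echo "$summary"
```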

# Log in to server, node-0, and node-1 individually and set the root password

grep root /etc/shadow

sudo passwd root

# Configure SSH access (allow root login and password authentication)

sed -i \

's/^#*PasswordAuthentication .*/PasswordAuthentication yes/' \

/etc/ssh/sshd_config

sed -i \

's/^#*PermitRootLogin.*/PermitRootLogin yes/' \

/etc/ssh/sshd_config

grep "^[^#]" /etc/ssh/sshd_config

PermitRootLogin yes # root login allowed

PasswordAuthentication yes # password authentication enabled

systemctl restart sshd

# Back on the jumpbox

# Generate a new SSH key

ssh-keygen -t rsa -N "" -f /root/.ssh/id_rsa

# Copy the SSH public key to each machine

# StrictHostKeyChecking=no - accept a host key automatically the first time it is seen, without the yes/no prompt

while read IP FQDN HOST SUBNET; do

sshpass -p '~~~' ssh-copy-id -o StrictHostKeyChecking=no root@${IP}

done < machines.txt

# Verify after setup

while read IP FQDN HOST SUBNET; do

ssh -n root@${IP} cat /root/.ssh/authorized_keys

done < machines.txt
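The `-n` flag on `ssh` matters inside these `while read` loops: without it, `ssh` reads from the same stdin as the loop and swallows the remaining lines of `machines.txt`, so only the first host is visited. The sketch below reproduces the effect without any network access. `fake_remote` is a hypothetical stand-in for `ssh` that drains stdin the same way.

```shell
#!/usr/bin/env bash
set -eu
list=$(mktemp)
printf '%s\n' host-a host-b host-c > "$list"

# fake_remote stands in for ssh: like ssh without -n, it consumes whatever is on stdin.
fake_remote() { cat > /dev/null; }

visited_without_guard=0
while read -r HOST; do
  fake_remote                      # swallows the remaining lines of $list
  visited_without_guard=$((visited_without_guard + 1))
done < "$list"

visited_with_guard=0
while read -r HOST; do
  fake_remote < /dev/null          # what ssh -n does: stdin comes from /dev/null
  visited_with_guard=$((visited_with_guard + 1))
done < "$list"

echo "without guard: ${visited_without_guard} host(s), with guard: ${visited_with_guard} host(s)"
# prints: without guard: 1 host(s), with guard: 3 host(s)
```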

# Set the hostnames

while read IP FQDN HOST SUBNET; do

CMD="sed -i 's/^127.0.1.1.*/127.0.1.1\t${FQDN} ${HOST}/' /etc/hosts"

ssh -n root@${IP} "$CMD"

ssh -n root@${IP} hostnamectl set-hostname ${HOST}

ssh -n root@${IP} systemctl restart systemd-hostnamed

done < machines.txt

while read IP FQDN HOST SUBNET; do

ssh -n root@${IP} hostname

done < machines.txt

# Host Lookup Table

echo "" > hosts

echo "# Kubernetes The Hard Way" >> hosts

while read IP FQDN HOST SUBNET; do

ENTRY="${IP} ${FQDN} ${HOST}"

echo $ENTRY >> hosts

done < machines.txt

cat hosts >> /etc/hosts

cat /etc/hosts

127.0.0.1 localhost

127.0.1.1 jumpbox

# The following lines are desirable for IPv6 capable hosts

::1 ip6-localhost ip6-loopback

fe00::0 ip6-localnet

ff00::0 ip6-mcastprefix

ff02::1 ip6-allnodes

ff02::2 ip6-allrouters

# Kubernetes The Hard Way

192.168.105.152 server.kubernetes.local server

192.168.105.153 node-0.kubernetes.local node-0

192.168.105.154 node-1.kubernetes.local node-1

# Append the host entries to /etc/hosts on every server

while read IP FQDN HOST SUBNET; do

scp hosts root@${HOST}:~/

ssh -n \

root@${HOST} "cat hosts >> /etc/hosts"

done < machines.txt
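Because the entries are appended with `>>`, running this step a second time would duplicate them in `/etc/hosts`. One possible guard, sketched here against a temporary file rather than the real `/etc/hosts`, is to append a line only when it is not already present. `add_host_entry` is a hypothetical helper, not something the tutorial provides.

```shell
#!/usr/bin/env bash
set -eu
target=$(mktemp)          # stand-in for /etc/hosts so the sketch is safe to run
printf '127.0.0.1 localhost\n' > "$target"

# add_host_entry: append the line only if an identical line is not already there.
add_host_entry() {
  local entry="$1" file="$2"
  grep -qxF -- "$entry" "$file" || echo "$entry" >> "$file"
}

for pass in 1 2; do       # two passes: the second one should change nothing
  add_host_entry "192.168.105.152 server.kubernetes.local server" "$target"
  add_host_entry "192.168.105.153 node-0.kubernetes.local node-0" "$target"
  add_host_entry "192.168.105.154 node-1.kubernetes.local node-1" "$target"
done

cat "$target"
```

`grep -x` matches whole lines and `-F` treats the entry as a fixed string, so dots in the IP address are not interpreted as regex wildcards.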

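4. CA Setup and TLS Certificate Generation

=> Enables identity verification and encrypted communication between cluster components

4-1) Public/private key concepts and the certificates needed to install k8s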

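Encrypting with a public key is about keeping data confidential,

while encrypting with a private key is about proving identity.

● Encrypting with the public key

The sender encrypts the data with the recipient's public key, and the recipient decrypts it with their own private key.

Because a public key can be distributed widely, many people can send data to the single holder of the private key.

● Encrypting with the private key [digital signature]

The private key holder encrypts the data (in practice, a hash of the data) with the private key and sends it together with the public key.

Anyone who obtains the public key and the data can then decrypt it with the public key.

If the encrypted data decrypts correctly with a public key, it must have been encrypted with the matching private key. In other words, the identity of the data's sender is confirmed.

This is the basic principle behind the digital signatures used in accredited certificate systems.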
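The signing idea can be tried directly with `openssl`, which the lab already relies on. This is a minimal sketch with a throwaway key pair; the file names `signer.key`, `signer.pub`, and `message.txt` are made up for illustration. It signs a message with the private key, verifies it with the public key, and then shows that a modified message no longer verifies.

```shell
#!/usr/bin/env bash
set -eu
work=$(mktemp -d)
cd "$work"

openssl genrsa -out signer.key 2048 2>/dev/null                 # private key (kept secret)
openssl rsa -in signer.key -pubout -out signer.pub 2>/dev/null  # public key (shared)

echo "hello kubernetes" > message.txt

# Sign: hash the message with SHA-256 and sign the digest with the private key.
openssl dgst -sha256 -sign signer.key -out message.sig message.txt

# Verify: anyone holding the public key can check the signature.
verify_ok=$(openssl dgst -sha256 -verify signer.pub -signature message.sig message.txt)
echo "$verify_ok"

# Tamper with the message; verification is now expected to fail.
echo "hello kubernetes!" > message.txt
if openssl dgst -sha256 -verify signer.pub -signature message.sig message.txt >/dev/null 2>&1; then
  tampered="accepted"
else
  tampered="rejected"
fi
echo "tampered message: ${tampered}"
```

A certificate applies the same mechanism: the CA signs the subject's public key and attributes, and clients verify that signature with the CA's public key from `ca.crt`.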

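[Certificate issuance process]

1. Use the private key to create a CSR containing the Subject, EKU, public key, and related information

2. The CA reviews the CSR, signs it with the CA private key, and issues the final certificate, which records the CA as its issuer

** Self-Signed Certificate Authority (CA) : the CA issues its own certificate; useful in test/development environments

** Distinguished Name (DN) : the string that expresses the Subject information

** Subject Alternative Name : adds valid names (domains, IPs) beyond the CN

** TLS Web Client Authentication : for client authentication

** TLS Web Server / Client Authentication : usable for both server and client authentication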

| Item | Private key | CSR | Subject (DN) | EKU | Certificate |
|---|---|---|---|---|---|
| Root CA | ca.key | X | | | ca.crt |
| admin | admin.key | admin.csr | CN = admin, O = system:masters | TLS Web Client Authentication | admin.crt |
| node-0 | node-0.key | node-0.csr | CN = system:node:node-0, O = system:nodes | TLS Web Server / Client Authentication | node-0.crt |
| node-1 | node-1.key | node-1.csr | CN = system:node:node-1, O = system:nodes | TLS Web Server / Client Authentication | node-1.crt |
| kube-proxy | kube-proxy.key | kube-proxy.csr | CN = system:kube-proxy, O = system:node-proxier | TLS Web Server / Client Authentication | kube-proxy.crt |
| kube-scheduler | kube-scheduler.key | kube-scheduler.csr | CN = system:kube-scheduler, O = system:kube-scheduler | TLS Web Server / Client Authentication | kube-scheduler.crt |
| kube-controller-manager | kube-controller-manager.key | kube-controller-manager.csr | CN = system:kube-controller-manager, O = system:kube-controller-manager | TLS Web Server / Client Authentication | kube-controller-manager.crt |
| kube-api-server | kube-api-server.key | kube-api-server.csr | CN = kubernetes, SAN: IP(127.0.0.1, 10.32.0.1), DNS(kubernetes,..) | TLS Web Server / Client Authentication | kube-api-server.crt |
| service-accounts | service-accounts.key | service-accounts.csr | CN = service-accounts | TLS Web Client Authentication | service-accounts.crt |

| Item | Network range or IP |
|---|---|
| clusterCIDR | 10.200.0.0/16 |
| → node-0 PodCIDR | 10.200.0.0/24 |
| → node-1 PodCIDR | 10.200.1.0/24 |
| ServiceCIDR | 10.32.0.0/24 |
| → api ClusterIP | 10.32.0.1 |
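The relationships in this table can be checked with plain shell arithmetic. The sketch below derives the API server's ClusterIP as the first address of the service range and confirms that each node's PodCIDR falls inside the clusterCIDR. The helpers `ip_to_int`, `int_to_ip`, and `cidr_within` are hypothetical names written for this illustration.

```shell
#!/usr/bin/env bash
set -eu

# Convert a dotted IPv4 address into one 32-bit integer.
ip_to_int() {
  set -- $(printf '%s' "$1" | tr '.' ' ')
  echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
}

# Convert a 32-bit integer back into dotted notation.
int_to_ip() {
  echo "$(( ($1 >> 24) & 255 )).$(( ($1 >> 16) & 255 )).$(( ($1 >> 8) & 255 )).$(( $1 & 255 ))"
}

# Succeeds when CIDR $1 lies entirely inside CIDR $2.
cidr_within() {
  local inner_ip inner_len outer_ip outer_len mask
  inner_ip=${1%/*}; inner_len=${1#*/}
  outer_ip=${2%/*}; outer_len=${2#*/}
  mask=$(( (0xFFFFFFFF << (32 - outer_len)) & 0xFFFFFFFF ))
  [ "$inner_len" -ge "$outer_len" ] &&
    [ $(( $(ip_to_int "$inner_ip") & mask )) -eq $(( $(ip_to_int "$outer_ip") & mask )) ]
}

SERVICE_CIDR="10.32.0.0/24"
CLUSTER_CIDR="10.200.0.0/16"

# The first address of the service range is the one assigned to the API server.
api_cluster_ip=$(int_to_ip $(( $(ip_to_int "${SERVICE_CIDR%/*}") + 1 )))
echo "api ClusterIP: ${api_cluster_ip}"

for pod_cidr in 10.200.0.0/24 10.200.1.0/24; do
  if cidr_within "$pod_cidr" "$CLUSTER_CIDR"; then
    echo "${pod_cidr} is inside ${CLUSTER_CIDR}"
  fi
done
```

This is why `10.32.0.1` appears as a SAN in the API server certificate: Pods reach the API server through that ClusterIP, so the certificate has to be valid for it.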

[ca.conf]

[req]

distinguished_name = req_distinguished_name

prompt = no # no interactive prompts when generating the CSR

x509_extensions = ca_x509_extensions # extensions applied when creating the CA certificate

[ca_x509_extensions] # CA certificate settings (root of trust)

basicConstraints = CA:TRUE # marks this certificate as a CA

keyUsage = cRLSign, keyCertSign # may sign other certificates and CRLs; the trust root for all Kubernetes authentication

[req_distinguished_name]

C = US

ST = Washington

L = Seattle

CN = CA # cluster CA

[admin] # admin user (kubectl)

distinguished_name = admin_distinguished_name

prompt = no

req_extensions = default_req_extensions

[admin_distinguished_name]

CN = admin # [K8S] CN → user

O = system:masters # [K8S] O → group; system:masters is the Kubernetes superuser group and bypasses all RBAC authorization

# Service Accounts

#

# The Kubernetes Controller Manager leverages a key pair to generate

# and sign service account tokens as described in the

# [managing service accounts](https://kubernetes.io/docs/admin/service-accounts-admin/)

# documentation.

[service-accounts] # ServiceAccount token signer

distinguished_name = service-accounts_distinguished_name

prompt = no

req_extensions = default_req_extensions

[service-accounts_distinguished_name]

CN = service-accounts # key pair for signing ServiceAccount tokens (used by the controller manager); the API server reads the certificate via --service-account-key-file

# Worker Nodes

#

# Kubernetes uses a [special-purpose authorization mode](https://kubernetes.io/docs/admin/authorization/node/)

# called Node Authorizer, that specifically authorizes API requests made

# by [Kubelets](https://kubernetes.io/docs/concepts/overview/components/#kubelet).

# In order to be authorized by the Node Authorizer, Kubelets must use a credential

# that identifies them as being in the `system:nodes` group, with a username

# of `system:node:<nodeName>`.

[node-0] # worker node certificate (kubelet)

distinguished_name = node-0_distinguished_name

prompt = no

req_extensions = node-0_req_extensions

[node-0_req_extensions]

basicConstraints = CA:FALSE

extendedKeyUsage = clientAuth, serverAuth # clientAuth: kubelet → apiserver, serverAuth: kubelet HTTPS server (10250)

keyUsage = critical, digitalSignature, keyEncipherment

nsCertType = client

nsComment = "Node-0 Certificate"

subjectAltName = DNS:node-0, IP:127.0.0.1

subjectKeyIdentifier = hash

[node-0_distinguished_name]

CN = system:node:node-0 # kubelet user; CN = system:node:<nodeName>

O = system:nodes # Node Authorizer group

C = US

ST = Washington

L = Seattle

[node-1]

distinguished_name = node-1_distinguished_name

prompt = no

req_extensions = node-1_req_extensions

[node-1_req_extensions]

basicConstraints = CA:FALSE

extendedKeyUsage = clientAuth, serverAuth

keyUsage = critical, digitalSignature, keyEncipherment

nsCertType = client

nsComment = "Node-1 Certificate"

subjectAltName = DNS:node-1, IP:127.0.0.1

subjectKeyIdentifier = hash

[node-1_distinguished_name]

CN = system:node:node-1

O = system:nodes

C = US

ST = Washington

L = Seattle

# Kube Proxy Section

[kube-proxy] # kube-proxy

distinguished_name = kube-proxy_distinguished_name

prompt = no

req_extensions = kube-proxy_req_extensions

[kube-proxy_req_extensions]

basicConstraints = CA:FALSE

extendedKeyUsage = clientAuth, serverAuth

keyUsage = critical, digitalSignature, keyEncipherment

nsCertType = client

nsComment = "Kube Proxy Certificate"

subjectAltName = DNS:kube-proxy, IP:127.0.0.1

subjectKeyIdentifier = hash

[kube-proxy_distinguished_name]

CN = system:kube-proxy

O = system:node-proxier # a system:node-proxier ClusterRoleBinding exists; allows control of service networking

C = US

ST = Washington

L = Seattle

# Controller Manager

[kube-controller-manager]

distinguished_name = kube-controller-manager_distinguished_name

prompt = no

req_extensions = kube-controller-manager_req_extensions

[kube-controller-manager_req_extensions]

basicConstraints = CA:FALSE

extendedKeyUsage = clientAuth, serverAuth

keyUsage = critical, digitalSignature, keyEncipherment

nsCertType = client

nsComment = "Kube Controller Manager Certificate"

subjectAltName = DNS:kube-controller-manager, IP:127.0.0.1

subjectKeyIdentifier = hash

[kube-controller-manager_distinguished_name] # manages cluster state: Nodes, ReplicaSets, SA tokens, and so on

CN = system:kube-controller-manager

O = system:kube-controller-manager

C = US

ST = Washington

L = Seattle

# Scheduler

[kube-scheduler]

distinguished_name = kube-scheduler_distinguished_name

prompt = no

req_extensions = kube-scheduler_req_extensions

[kube-scheduler_req_extensions]

basicConstraints = CA:FALSE

extendedKeyUsage = clientAuth, serverAuth

keyUsage = critical, digitalSignature, keyEncipherment

nsCertType = client

nsComment = "Kube Scheduler Certificate"

subjectAltName = DNS:kube-scheduler, IP:127.0.0.1

subjectKeyIdentifier = hash

[kube-scheduler_distinguished_name]

CN = system:kube-scheduler

O = system:kube-scheduler # permissions dedicated to Pod scheduling

C = US

ST = Washington

L = Seattle

# API Server

#

# The Kubernetes API server is automatically assigned the `kubernetes`

# internal dns name, which will be linked to the first IP address (`10.32.0.1`)

# from the address range (`10.32.0.0/24`) reserved for internal cluster

# services.

[kube-api-server] # API server certificate

distinguished_name = kube-api-server_distinguished_name

prompt = no

req_extensions = kube-api-server_req_extensions

[kube-api-server_req_extensions]

basicConstraints = CA:FALSE

extendedKeyUsage = clientAuth, serverAuth

keyUsage = critical, digitalSignature, keyEncipherment

nsCertType = client, server

nsComment = "Kube API Server Certificate"

subjectAltName = @kube-api-server_alt_names

subjectKeyIdentifier = hash

[kube-api-server_alt_names] # SAN (Subject Alternative Name): every internal/external address used to reach the API server

IP.0 = 127.0.0.1

IP.1 = 10.32.0.1

DNS.0 = kubernetes

DNS.1 = kubernetes.default

DNS.2 = kubernetes.default.svc

DNS.3 = kubernetes.default.svc.cluster

DNS.4 = kubernetes.svc.cluster.local

DNS.5 = server.kubernetes.local

DNS.6 = api-server.kubernetes.local

[kube-api-server_distinguished_name]

CN = kubernetes

C = US

ST = Washington

L = Seattle

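[default_req_extensions] # shared CSR extensions for client-only certificates (used by the admin and service-accounts sections above); the other sections define their own extensions and add serverAuth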

basicConstraints = CA:FALSE

extendedKeyUsage = clientAuth

keyUsage = critical, digitalSignature, keyEncipherment

nsCertType = client

nsComment = "Admin Client Certificate"

subjectKeyIdentifier = hash

4-2) Hands-on

| Server | Files | Binaries |
|---|---|---|
| server | ca.key / ca.crt, kube-api-server.key / kube-api-server.crt, service-accounts.key / service-accounts.crt | |
| node-0 | ca.crt, node-0.key / node-0.crt (kubelet) | |
| node-1 | ca.crt, node-1.key / node-1.crt (kubelet) | |

## Generate the certificates on the jumpbox, then copy only the ones each machine (server, node-0, node-1) needs

## Certificate Authority

# Generate the Root CA private key: ca.key

openssl genrsa -out ca.key 4096

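# Generate the Root CA certificate

# -x509 : create an X.509 certificate directly without a separate CSR, i.e. a self-signed certificate

# -noenc : do not encrypt the private key, so the CA key (ca.key) has no passphrase

# -config ca.conf : read the certificate details from the config file; the [req] section is used - DN from [req_distinguished_name], CA extensions from [ca_x509_extensions]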

openssl req -x509 -new -sha512 -noenc \

-key ca.key -days 3653 \

-config ca.conf \

-out ca.crt

## Create Client and Server Certificates

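# With the CA certificate in place, generate client and server certificates for each Kubernetes component, plus a client certificate for the k8s admin user

# admin: used when a client such as kubectl talks to the API server

# Create the private key, create a CSR from the private key, then issue the certificate from the CSR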

certs=(

"admin" "node-0" "node-1"

"kube-proxy" "kube-scheduler"

"kube-controller-manager"

"kube-api-server"

"service-accounts"

)

for i in ${certs[*]}; do

openssl genrsa -out "${i}.key" 4096

openssl req -new -key "${i}.key" -sha256 \

-config "ca.conf" -section ${i} \

-out "${i}.csr"

openssl x509 -req -days 3653 -in "${i}.csr" \

-copy_extensions copyall \

-sha256 -CA "ca.crt" \

-CAkey "ca.key" \

-CAcreateserial \

-out "${i}.crt"

done
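After a loop like this, two quick checks help catch mistakes early: that each certificate chains back to the CA, and that each certificate matches its private key. The sketch below runs both checks on a throwaway CA and an `admin` certificate created in a temporary directory. It uses `-subj` instead of `ca.conf` so that it is self-contained, which is a simplification of the lab's actual commands.

```shell
#!/usr/bin/env bash
set -eu
work=$(mktemp -d)
cd "$work"

# Throwaway CA and client certificate, generated only for this sketch.
openssl genrsa -out ca.key 2048 2>/dev/null
openssl req -x509 -new -key ca.key -sha256 -days 1 -subj "/CN=CA" -out ca.crt

openssl genrsa -out admin.key 2048 2>/dev/null
openssl req -new -key admin.key -sha256 -subj "/CN=admin/O=system:masters" -out admin.csr
openssl x509 -req -days 1 -in admin.csr -sha256 \
  -CA ca.crt -CAkey ca.key -CAcreateserial -out admin.crt 2>/dev/null

# Check 1: the certificate chains back to the CA.
chain_result=$(openssl verify -CAfile ca.crt admin.crt)
echo "$chain_result"

# Check 2: the certificate and the private key carry the same public key.
cert_pub=$(openssl x509 -in admin.crt -noout -pubkey | openssl sha256)
key_pub=$(openssl pkey -in admin.key -pubout | openssl sha256)
if [ "$cert_pub" = "$key_pub" ]; then
  echo "key matches certificate"
fi
```

In the lab directory the same two checks could be wrapped in `for i in ${certs[*]}` and pointed at the real `ca.crt`.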

## Distribute the Client and Server Certificates

# Copy certificates to server

# kube-api-server and service-accounts perform the cluster's core security functions (TLS server, token signing)

# so the actual key and certificate files are required

scp \

ca.key ca.crt \

kube-api-server.key kube-api-server.crt \

service-accounts.key service-accounts.crt \

root@server:~/

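# Copy certificates to node-0 and node-1

# ca.crt is used to verify the API server's certificate

# Copy each node's certificate and key for API server <-> kubelet communication. The kubeconfig created in the next step embeds the client certificate (--embed-certs=true), so the kubelet does not need these files to authenticate to the API server; they may still be referenced by kubelet-config.yaml (for example for the kubelet's own TLS endpoint), so copy them anyway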

for host in node-0 node-1; do

ssh root@${host} mkdir /var/lib/kubelet/

scp ca.crt root@${host}:/var/lib/kubelet/

scp ${host}.crt \

root@${host}:/var/lib/kubelet/kubelet.crt

scp ${host}.key \

root@${host}:/var/lib/kubelet/kubelet.key

done

5. Generating Client Authentication Config Files for the API Server

=> *.kubeconfig : the authentication and connection settings file a client component uses to reach the API server

| Server | Files | Binaries |
|---|---|---|
| server | ca.key / ca.crt, kube-api-server.key / kube-api-server.crt, service-accounts.key / service-accounts.crt, admin.kubeconfig, kube-scheduler.kubeconfig, kube-controller-manager.kubeconfig | |
| node-0 | ca.crt, node-0.key / node-0.crt, node-0.kubeconfig, kube-proxy.kubeconfig | |
| node-1 | ca.crt, node-1.key / node-1.crt, node-1.kubeconfig, kube-proxy.kubeconfig | |

# Generate a kubeconfig file for the node-0 and node-1 worker nodes

# --embed-certs=true : embeds the certificate and key in the kubeconfig, so TLS authentication works without the separate files

for host in node-0 node-1; do

kubectl config set-cluster kubernetes-the-hard-way \

--certificate-authority=ca.crt \

--embed-certs=true \

--server=https://server.kubernetes.local:6443 \

--kubeconfig=${host}.kubeconfig

kubectl config set-credentials system:node:${host} \

--client-certificate=${host}.crt \

--client-key=${host}.key \

--embed-certs=true \

--kubeconfig=${host}.kubeconfig

kubectl config set-context default \

--cluster=kubernetes-the-hard-way \

--user=system:node:${host} \

--kubeconfig=${host}.kubeconfig

kubectl config use-context default \

--kubeconfig=${host}.kubeconfig

done

kubectl config get-contexts --kubeconfig=node-0.kubeconfig

CURRENT NAME CLUSTER AUTHINFO NAMESPACE

* default kubernetes-the-hard-way system:node:node-0

# Generate a kubeconfig file for the kube-proxy service

{

kubectl config set-cluster kubernetes-the-hard-way \

--certificate-authority=ca.crt \

--embed-certs=true \

--server=https://server.kubernetes.local:6443 \

--kubeconfig=kube-proxy.kubeconfig

kubectl config set-credentials system:kube-proxy \

--client-certificate=kube-proxy.crt \

--client-key=kube-proxy.key \

--embed-certs=true \

--kubeconfig=kube-proxy.kubeconfig

kubectl config set-context default \

--cluster=kubernetes-the-hard-way \

--user=system:kube-proxy \

--kubeconfig=kube-proxy.kubeconfig

kubectl config use-context default \

--kubeconfig=kube-proxy.kubeconfig

}

# Generate a kubeconfig file for the kube-controller-manager service

{

kubectl config set-cluster kubernetes-the-hard-way \

--certificate-authority=ca.crt \

--embed-certs=true \

--server=https://server.kubernetes.local:6443 \

--kubeconfig=kube-controller-manager.kubeconfig

kubectl config set-credentials system:kube-controller-manager \

--client-certificate=kube-controller-manager.crt \

--client-key=kube-controller-manager.key \

--embed-certs=true \

--kubeconfig=kube-controller-manager.kubeconfig

kubectl config set-context default \

--cluster=kubernetes-the-hard-way \

--user=system:kube-controller-manager \

--kubeconfig=kube-controller-manager.kubeconfig

kubectl config use-context default \

--kubeconfig=kube-controller-manager.kubeconfig

}

# Generate a kubeconfig file for the kube-scheduler service

{

kubectl config set-cluster kubernetes-the-hard-way \

--certificate-authority=ca.crt \

--embed-certs=true \

--server=https://server.kubernetes.local:6443 \

--kubeconfig=kube-scheduler.kubeconfig

kubectl config set-credentials system:kube-scheduler \

--client-certificate=kube-scheduler.crt \

--client-key=kube-scheduler.key \

--embed-certs=true \

--kubeconfig=kube-scheduler.kubeconfig

kubectl config set-context default \

--cluster=kubernetes-the-hard-way \

--user=system:kube-scheduler \

--kubeconfig=kube-scheduler.kubeconfig

kubectl config use-context default \

--kubeconfig=kube-scheduler.kubeconfig

}

# Generate a kubeconfig file for the admin user

# kubeconfig used by kubectl

{

kubectl config set-cluster kubernetes-the-hard-way \

--certificate-authority=ca.crt \

--embed-certs=true \

--server=https://127.0.0.1:6443 \

--kubeconfig=admin.kubeconfig

kubectl config set-credentials admin \

--client-certificate=admin.crt \

--client-key=admin.key \

--embed-certs=true \

--kubeconfig=admin.kubeconfig

kubectl config set-context default \

--cluster=kubernetes-the-hard-way \

--user=admin \

--kubeconfig=admin.kubeconfig

kubectl config use-context default \

--kubeconfig=admin.kubeconfig

}
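The five blocks above repeat the same four `kubectl config` calls with different names. As a sketch of how that repetition could be folded into one function, `make_kubeconfig` below is a hypothetical helper (not part of the tutorial) that takes the file prefix, the user name, and the API server URL. It uses only the `kubectl config` subcommands and flags already shown above.

```shell
#!/usr/bin/env bash
# make_kubeconfig is a hypothetical helper wrapping the four kubectl calls
# repeated above. Arguments: <file prefix> <user name> <API server URL>.
make_kubeconfig() {
  local name="$1" user="$2" server="$3"
  local out="${name}.kubeconfig"

  kubectl config set-cluster kubernetes-the-hard-way \
    --certificate-authority=ca.crt \
    --embed-certs=true \
    --server="${server}" \
    --kubeconfig="${out}" &&
  kubectl config set-credentials "${user}" \
    --client-certificate="${name}.crt" \
    --client-key="${name}.key" \
    --embed-certs=true \
    --kubeconfig="${out}" &&
  kubectl config set-context default \
    --cluster=kubernetes-the-hard-way \
    --user="${user}" \
    --kubeconfig="${out}" &&
  kubectl config use-context default --kubeconfig="${out}"
}

# Example calls (run in the directory that holds ca.crt and the component certificates):
#   make_kubeconfig kube-proxy system:kube-proxy https://server.kubernetes.local:6443
#   make_kubeconfig admin      admin             https://127.0.0.1:6443
```

Keeping the server URL as a parameter preserves the one real difference between the blocks: the admin kubeconfig targets `https://127.0.0.1:6443`, while the others target `https://server.kubernetes.local:6443`.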

# Distribute the Kubernetes Configuration Files

ls -l *.kubeconfig

-rw------- 1 root root 9953 Jan 10 09:07 admin.kubeconfig

-rw------- 1 root root 10305 Jan 10 09:07 kube-controller-manager.kubeconfig

-rw------- 1 root root 10187 Jan 10 09:07 kube-proxy.kubeconfig

-rw------- 1 root root 10231 Jan 10 09:07 kube-scheduler.kubeconfig

-rw------- 1 root root 10157 Jan 10 09:04 node-0.kubeconfig

-rw------- 1 root root 10161 Jan 10 09:04 node-1.kubeconfig

for host in node-0 node-1; do

ssh root@${host} "mkdir -p /var/lib/{kube-proxy,kubelet}"

scp kube-proxy.kubeconfig \

root@${host}:/var/lib/kube-proxy/kubeconfig

scp ${host}.kubeconfig \

root@${host}:/var/lib/kubelet/kubeconfig

done

scp admin.kubeconfig \

kube-controller-manager.kubeconfig \

kube-scheduler.kubeconfig \

root@server:~/

6. Encrypting Secrets Stored in etcd

| Server | Files | Binaries |
|---|---|---|
| server | ca.key / ca.crt, kube-api-server.key / kube-api-server.crt, service-accounts.key / service-accounts.crt, admin.kubeconfig, kube-scheduler.kubeconfig, kube-controller-manager.kubeconfig, encryption-config.yaml | |
| node-0 | ca.crt, node-0.key / node-0.crt, node-0.kubeconfig, kube-proxy.kubeconfig | |
| node-1 | ca.crt, node-1.key / node-1.crt, node-1.kubeconfig, kube-proxy.kubeconfig | |

# Generate an encryption key

export ENCRYPTION_KEY=$(head -c 32 /dev/urandom | base64)

cat configs/encryption-config.yaml

kind: EncryptionConfiguration # defines how kube-apiserver encrypts the resources it stores in etcd
apiVersion: apiserver.config.k8s.io/v1 # referenced through the --encryption-provider-config flag
resources:
  - resources:
      - secrets # resources to encrypt: only Secrets here
    providers: # supported providers, tried in the order listed (the first one is used for writes), e.g. identity, aescbc, aesgcm, kms v2, secretbox
      - aescbc: # encrypt Secrets stored in etcd with AES-CBC
          keys:
            - name: key1 # key identifier (recorded in the etcd data)
              secret: ${ENCRYPTION_KEY}
      - identity: {} # plaintext; mainly for backward compatibility / gradual encryption

# Listing aescbc first and identity second is a backward-compatibility strategy: new data is always stored encrypted, while data previously stored as plaintext can still be read

# Create the encryption-config.yaml encryption config file

# envsubst : replaces environment variables (here the exported $ENCRYPTION_KEY) inside a file

envsubst < configs/encryption-config.yaml \

> encryption-config.yaml

scp encryption-config.yaml root@server:~/
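A quick sanity check on the generated key is to decode it again and count the bytes. The sketch below repeats the key generation command from above and confirms that the Base64 string decodes back to the 32 bytes requested by `head -c 32`. It assumes a `base64` that accepts `-d` for decoding, as GNU coreutils does.

```shell
#!/usr/bin/env bash
set -eu

# Same command as above: 32 random bytes, Base64-encoded for use in YAML.
ENCRYPTION_KEY=$(head -c 32 /dev/urandom | base64)

# Decode again and count bytes to confirm nothing was truncated or wrapped.
key_bytes=$(printf '%s' "$ENCRYPTION_KEY" | base64 -d | wc -c)
echo "encoded length: ${#ENCRYPTION_KEY} characters"
echo "decoded length: ${key_bytes} bytes"
```

If `encryption-config.yaml` ever shows an empty `secret:` value, the usual cause is that `ENCRYPTION_KEY` was not exported in the shell that ran `envsubst`.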

7. Bootstrapping the etcd Cluster

=> Start the etcd service on the server node

| Server | Files | Binaries |
|---|---|---|
| server | ca.key / ca.crt, kube-api-server.key / kube-api-server.crt, service-accounts.key / service-accounts.crt, admin.kubeconfig, kube-scheduler.kubeconfig, kube-controller-manager.kubeconfig, encryption-config.yaml | etcd, etcdctl |
| node-0 | ca.crt, node-0.key / node-0.crt, node-0.kubeconfig, kube-proxy.kubeconfig | |
| node-1 | ca.crt, node-1.key / node-1.crt, node-1.kubeconfig, kube-proxy.kubeconfig | |

# etcd communicates over plain HTTP in this lab

# Change the etcd member name: controller -> server

ETCD_NAME=server

cat > units/etcd.service <<EOF

[Unit]

Description=etcd

Documentation=https://github.com/etcd-io/etcd

[Service]

Type=notify

ExecStart=/usr/local/bin/etcd \\

--name ${ETCD_NAME} \\

--initial-advertise-peer-urls http://127.0.0.1:2380 \\

--listen-peer-urls http://127.0.0.1:2380 \\

--listen-client-urls http://127.0.0.1:2379 \\

--advertise-client-urls http://127.0.0.1:2379 \\

--initial-cluster-token etcd-cluster-0 \\

--initial-cluster ${ETCD_NAME}=http://127.0.0.1:2380 \\

--initial-cluster-state new \\

--data-dir=/var/lib/etcd

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

EOF
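The unit file above is written through an unquoted heredoc, which is why `${ETCD_NAME}` is filled in and why every continuation is typed as `\\`. The shortened sketch below, which writes to a temporary file instead of `units/etcd.service`, shows what actually lands in the file.

```shell
#!/usr/bin/env bash
set -eu
ETCD_NAME=server
unit=$(mktemp)

# Unquoted delimiter (EOF): variables expand and "\\" collapses to a single "\".
cat > "$unit" <<EOF
ExecStart=/usr/local/bin/etcd \\
  --name ${ETCD_NAME} \\
  --data-dir=/var/lib/etcd
EOF

cat "$unit"
# prints:
# ExecStart=/usr/local/bin/etcd \
#   --name server \
#   --data-dir=/var/lib/etcd
```

With a single `\` at the end of a line, the shell would join the lines before writing them. Quoting the delimiter (`<<'EOF'`) would keep the backslashes but also leave `${ETCD_NAME}` unexpanded.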

# Copy etcd binaries and systemd unit files to the server machine

scp \

downloads/controller/etcd \

downloads/client/etcdctl \

units/etcd.service \

root@server:~/

# Connect to 'server'

ssh root@server

# Install the etcd Binaries

# Extract and install the etcd server and the etcdctl command line utility

mv etcd etcdctl /usr/local/bin/

# Configure the etcd Server

mkdir -p /etc/etcd /var/lib/etcd

chmod 700 /var/lib/etcd

cp ca.crt kube-api-server.key kube-api-server.crt /etc/etcd/

# Create the etcd.service systemd unit file:

mv etcd.service /etc/systemd/system/

# Start the etcd Server

systemctl daemon-reload

systemctl enable etcd

systemctl start etcd

# Verification

# List the etcd cluster members

etcdctl member list

6702b0a34e2cfd39, started, server, http://127.0.0.1:2380, http://127.0.0.1:2379, false

8. Bootstrapping the Controllers

=> Run kube-apiserver, kube-scheduler, and kube-controller-manager directly as systemd services on the server node

| Server | Files | Binaries |
|---|---|---|
| server | ca.key / ca.crt, kube-api-server.key / kube-api-server.crt, service-accounts.key / service-accounts.crt, admin.kubeconfig, kube-scheduler.kubeconfig, kube-controller-manager.kubeconfig, encryption-config.yaml | etcd, etcdctl, kubectl, kube-apiserver, kube-scheduler, kube-controller-manager |
| node-0 | ca.crt, node-0.key / node-0.crt, node-0.kubeconfig, kube-proxy.kubeconfig | |
| node-1 | ca.crt, node-1.key / node-1.crt, node-1.kubeconfig, kube-proxy.kubeconfig | |

## Run on the jumpbox

# Edit kube-apiserver.service: add --service-cluster-ip-range

# Use the IP range that matches the SAN addresses defined in ca.conf

cat ca.conf | grep '\[kube-api-server_alt_names' -A2

cat << EOF > units/kube-apiserver.service

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

[Service]

ExecStart=/usr/local/bin/kube-apiserver \\

--allow-privileged=true \\

--apiserver-count=1 \\

--audit-log-maxage=30 \\

--audit-log-maxbackup=3 \\

--audit-log-maxsize=100 \\

--audit-log-path=/var/log/audit.log \\

--authorization-mode=Node,RBAC \\

--bind-address=0.0.0.0 \\

--client-ca-file=/var/lib/kubernetes/ca.crt \\

--enable-admission-plugins=NamespaceLifecycle,NodeRestriction,LimitRanger,ServiceAccount,DefaultStorageClass,ResourceQuota \\

--etcd-servers=http://127.0.0.1:2379 \\

--event-ttl=1h \\

--encryption-provider-config=/var/lib/kubernetes/encryption-config.yaml \\

--kubelet-certificate-authority=/var/lib/kubernetes/ca.crt \\

--kubelet-client-certificate=/var/lib/kubernetes/kube-api-server.crt \\

--kubelet-client-key=/var/lib/kubernetes/kube-api-server.key \\

--runtime-config='api/all=true' \\

--service-account-key-file=/var/lib/kubernetes/service-accounts.crt \\

--service-account-signing-key-file=/var/lib/kubernetes/service-accounts.key \\

--service-account-issuer=https://server.kubernetes.local:6443 \\

--service-cluster-ip-range=10.32.0.0/24 \\

--service-node-port-range=30000-32767 \\

--tls-cert-file=/var/lib/kubernetes/kube-api-server.crt \\

--tls-private-key-file=/var/lib/kubernetes/kube-api-server.key \\

--v=2

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

EOF

# Review the internal system RBAC rules that allow kube-apiserver to reach the kubelet (Node)

cat configs/kube-apiserver-to-kubelet.yaml ; echo

# api-server: check the Subject CN

openssl x509 -in kube-api-server.crt -text -noout

Subject: CN = kubernetes, C = US, ST = Washington, L = Seattle

# Connect to the jumpbox and copy Kubernetes binaries and systemd unit files to the server machine

scp \

downloads/controller/kube-apiserver \

downloads/controller/kube-controller-manager \

downloads/controller/kube-scheduler \

downloads/client/kubectl \

units/kube-apiserver.service \

units/kube-controller-manager.service \

units/kube-scheduler.service \

configs/kube-scheduler.yaml \

configs/kube-apiserver-to-kubelet.yaml \

root@server:~/

ssh server ls -l /root

total 295436

-rw------- 1 root root 9953 Jan 10 09:09 admin.kubeconfig

-rw-r--r-- 1 root root 1899 Jan 10 09:02 ca.crt

-rw------- 1 root root 3272 Jan 10 09:02 ca.key

-rw-r--r-- 1 root root 271 Jan 10 09:27 encryption-config.yaml

-rw-r--r-- 1 root root 168 Jan 10 06:14 hosts

-rwxr-xr-x 1 root root 93261976 Jan 10 10:29 kube-apiserver

-rw-r--r-- 1 root root 2354 Jan 10 09:02 kube-api-server.crt

-rw------- 1 root root 3268 Jan 10 09:02 kube-api-server.key

-rw-r--r-- 1 root root 1442 Jan 10 10:29 kube-apiserver.service

-rw-r--r-- 1 root root 727 Jan 10 10:29 kube-apiserver-to-kubelet.yaml

-rwxr-xr-x 1 root root 85987480 Jan 10 10:29 kube-controller-manager

-rw------- 1 root root 10305 Jan 10 09:09 kube-controller-manager.kubeconfig

-rw-r--r-- 1 root root 735 Jan 10 10:29 kube-controller-manager.service

-rwxr-xr-x 1 root root 57323672 Jan 10 10:29 kubectl

-rwxr-xr-x 1 root root 65843352 Jan 10 10:29 kube-scheduler

-rw------- 1 root root 10231 Jan 10 09:09 kube-scheduler.kubeconfig

-rw-r--r-- 1 root root 281 Jan 10 10:29 kube-scheduler.service

-rw-r--r-- 1 root root 191 Jan 10 10:29 kube-scheduler.yaml

-rw-r--r-- 1 root root 2004 Jan 10 09:02 service-accounts.crt

-rw------- 1 root root 3272 Jan 10 09:02 service-accounts.key

## Run on server

# Create the Kubernetes configuration directory:

mkdir -p /etc/kubernetes/config

# Install the Kubernetes binaries

mv kube-apiserver \

kube-controller-manager \

kube-scheduler kubectl \

/usr/local/bin/

ls -l /usr/local/bin/kube-*

-rwxr-xr-x 1 root root 93261976 Jan 10 10:29 /usr/local/bin/kube-apiserver

-rwxr-xr-x 1 root root 85987480 Jan 10 10:29 /usr/local/bin/kube-controller-manager

-rwxr-xr-x 1 root root 65843352 Jan 10 10:29 /usr/local/bin/kube-scheduler

# Configure the Kubernetes API Server

mkdir -p /var/lib/kubernetes/

mv ca.crt ca.key \

kube-api-server.key kube-api-server.crt \

service-accounts.key service-accounts.crt \

encryption-config.yaml \

/var/lib/kubernetes/

ls -l /var/lib/kubernetes/

total 28

-rw-r--r-- 1 root root 1899 Jan 10 09:02 ca.crt

-rw------- 1 root root 3272 Jan 10 09:02 ca.key

-rw-r--r-- 1 root root 271 Jan 10 09:27 encryption-config.yaml

-rw-r--r-- 1 root root 2354 Jan 10 09:02 kube-api-server.crt

-rw------- 1 root root 3268 Jan 10 09:02 kube-api-server.key

-rw-r--r-- 1 root root 2004 Jan 10 09:02 service-accounts.crt

-rw------- 1 root root 3272 Jan 10 09:02 service-accounts.key

# Create the kube-apiserver.service systemd unit file

mv kube-apiserver.service \

/etc/systemd/system/kube-apiserver.service

# Configure the Kubernetes Controller Manager

mv kube-controller-manager.kubeconfig /var/lib/kubernetes/

mv kube-controller-manager.service /etc/systemd/system/

# Configure the Kubernetes Scheduler

mv kube-scheduler.kubeconfig /var/lib/kubernetes/

mv kube-scheduler.yaml /etc/kubernetes/config/

mv kube-scheduler.service /etc/systemd/system/

# Start the Controller Services

systemctl daemon-reload

systemctl enable kube-apiserver kube-controller-manager kube-scheduler

systemctl start kube-apiserver kube-controller-manager kube-scheduler

# Verify this using the kubectl command line tool

kubectl cluster-info --kubeconfig admin.kubeconfig

Kubernetes control plane is running at https://127.0.0.1:6443

## Run on the jumpbox

# Verification

curl --cacert ca.crt https://server.kubernetes.local:6443/version

{

"major": "1",

"minor": "32",

"gitVersion": "v1.32.3",

"gitCommit": "32cc146f75aad04beaaa245a7157eb35063a9f99",

"gitTreeState": "clean",

"buildDate": "2025-03-11T19:52:21Z",

"goVersion": "go1.23.6",

"compiler": "gc",

"platform": "linux/amd64"

}

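[RBAC settings that let the API server access kubelet resources]

# RBAC for Kubelet Authorization (run on server: admin.kubeconfig points at https://127.0.0.1:6443, and the YAML file was copied to root's home directory there)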

cat kube-apiserver-to-kubelet.yaml

apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
  name: system:kube-apiserver-to-kubelet
rules:
  - apiGroups:
      - ""
    resources:
      - nodes/proxy
      - nodes/stats
      - nodes/log
      - nodes/spec
      - nodes/metrics
    verbs:
      - "*"
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: system:kube-apiserver
  namespace: ""
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:kube-apiserver-to-kubelet
subjects:
  - apiGroup: rbac.authorization.k8s.io
    kind: User
    name: kubernetes

kubectl apply -f kube-apiserver-to-kubelet.yaml --kubeconfig admin.kubeconfig
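To confirm that the binding took effect, one option is to ask the API server whether the `kubernetes` user (the subject of the ClusterRoleBinding) may reach each kubelet subresource. The following is a minimal sketch, not part of the original lab: the function name `check_kubelet_rbac` is hypothetical, and it assumes `admin.kubeconfig` is in the current directory and that the admin user is allowed to impersonate other users.

```shell
#!/usr/bin/env bash
# Hypothetical helper: asks the API server whether the "kubernetes" user
# may access each kubelet subresource listed in the ClusterRole.
check_kubelet_rbac() {
  local kubeconfig="${1:-admin.kubeconfig}"   # assumed location of the admin kubeconfig
  local sub
  for sub in proxy stats log spec metrics; do
    printf 'nodes/%s: ' "$sub"
    # "kubectl auth can-i" prints yes or no; --as impersonates the given user.
    # "|| true" keeps the loop going when the answer is "no" (exit code 1).
    kubectl auth can-i get nodes --subresource="$sub" \
      --as kubernetes --kubeconfig "$kubeconfig" || true
  done
}

# Usage (on server, after applying the manifest):
#   check_kubelet_rbac admin.kubeconfig
```

If every line ends in `yes`, the API server's identity is authorized for the kubelet API paths that commands such as `kubectl logs` and `kubectl exec` depend on.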

9. Bootstrapping the Workers

=> Install runc, the container networking plugins, containerd, kubelet, and kube-proxy on node-0 and node-1

| Server | Files | Binaries |

| server | ca.key / ca.crt, kube-api-server.key / kube-api-server.crt, service-accounts.key / service-accounts.crt, admin.kubeconfig, kube-scheduler.kubeconfig, kube-controller-manager.kubeconfig, encryption-config.yaml | etcd, etcdctl, kubectl, kube-apiserver, kube-scheduler, kube-controller-manager |

| node-0 | ca.crt, node-0.key / node-0.crt, node-0.kubeconfig, kube-proxy.kubeconfig | crictl, kube-proxy, kubelet, runc, containerd, containerd-shim-runc-v2, containerd-stress, cni-plugins/* |

| node-1 | ca.crt, node-1.key / node-1.crt, node-1.kubeconfig, kube-proxy.kubeconfig | crictl, kube-proxy, kubelet, runc, containerd, containerd-shim-runc-v2, containerd-stress, cni-plugins/* |

## Run on the jumpbox

# Prerequisites

# Generate the CNI (bridge) config and the kubelet-config file for each node, then copy them to node-0 and node-1

for HOST in node-0 node-1; do

SUBNET=$(grep ${HOST} machines.txt | cut -d " " -f 4)

sed "s|SUBNET|$SUBNET|g" \

configs/10-bridge.conf > 10-bridge.conf

sed "s|SUBNET|$SUBNET|g" \

configs/kubelet-config.yaml > kubelet-config.yaml

scp 10-bridge.conf kubelet-config.yaml \

root@${HOST}:~/

done
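The loop above takes each node's Pod subnet from the fourth field of `machines.txt` and substitutes it for the `SUBNET` placeholder. Below is a small standalone sketch of that substitution. The FQDN values and the one-line template are assumptions made for illustration (the real `configs/10-bridge.conf` is a larger JSON file), and `render_for_host` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
workdir=$(mktemp -d)

# Sample machines.txt: IP, FQDN, hostname, Pod subnet (FQDNs are assumed values)
cat > "$workdir/machines.txt" <<'EOF'
192.168.105.152 server.kubernetes.local server
192.168.105.153 node-0.kubernetes.local node-0 10.200.0.0/24
192.168.105.154 node-1.kubernetes.local node-1 10.200.1.0/24
EOF

# Hypothetical one-line template standing in for configs/10-bridge.conf
echo '"subnet": "SUBNET"' > "$workdir/template.conf"

render_for_host() {
  local host="$1" subnet
  # The 4th space-separated field of the host's line is its Pod subnet
  subnet=$(grep "$host" "$workdir/machines.txt" | cut -d " " -f 4)
  # "|" is the sed delimiter because the subnet itself contains "/"
  sed "s|SUBNET|$subnet|g" "$workdir/template.conf"
}

render_for_host node-0
render_for_host node-1
```

Each node ends up with its own subnet in the rendered file, which is why the files are generated inside the loop rather than copied as-is from `configs/`.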

for HOST in node-0 node-1; do

scp \

downloads/worker/* \

downloads/client/kubectl \

configs/99-loopback.conf \

configs/containerd-config.toml \

configs/kube-proxy-config.yaml \

units/containerd.service \

units/kubelet.service \

units/kube-proxy.service \

root@${HOST}:~/

done

for HOST in node-0 node-1; do

ssh root@${HOST} "mkdir -p ~/cni-plugins/"

scp \

downloads/cni-plugins/* \

root@${HOST}:~/cni-plugins/

done

ssh node-0 ls -l /root

ssh node-1 ls -l /root

ssh node-0 ls -l /root/cni-plugins

ssh node-1 ls -l /root/cni-plugins

## Run after logging in to each node (node-0, node-1)

# Provisioning a Kubernetes Worker Node

apt-get -y install socat conntrack ipset kmod psmisc bridge-utils

swapoff -a

# Create the installation directories

mkdir -p \

/etc/cni/net.d \

/opt/cni/bin \

/var/lib/kubelet \

/var/lib/kube-proxy \

/var/lib/kubernetes \

/var/run/kubernetes

# Install the worker binaries

mv crictl kube-proxy kubelet runc /usr/local/bin/

mv containerd containerd-shim-runc-v2 containerd-stress /bin/

mv cni-plugins/* /opt/cni/bin/

# Configure CNI Networking

mv 10-bridge.conf 99-loopback.conf /etc/cni/net.d/

modprobe br-netfilter

echo "br-netfilter" >> /etc/modules-load.d/modules.conf

echo "net.bridge.bridge-nf-call-iptables = 1" >> /etc/sysctl.d/kubernetes.conf

echo "net.bridge.bridge-nf-call-ip6tables = 1" >> /etc/sysctl.d/kubernetes.conf

sysctl -p /etc/sysctl.d/kubernetes.conf

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1
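Note that the `echo ... >>` lines above append on every run, so repeating this step on a node leaves duplicate entries in the files. A minimal sketch of an append-only-if-missing helper follows. The name `append_once` is hypothetical, and the demo writes to a temporary file rather than to `/etc/sysctl.d/kubernetes.conf`.

```shell
#!/usr/bin/env bash
# Hypothetical helper: append a line to a file only if that exact line is not already present.
append_once() {
  local line="$1" file="$2"
  touch "$file"
  # -x: match the whole line, -F: fixed string (no regex), -q: no output
  grep -qxF -- "$line" "$file" || echo "$line" >> "$file"
}

# Demo against a temporary file instead of /etc/sysctl.d/kubernetes.conf
conf=$(mktemp)
append_once "net.bridge.bridge-nf-call-iptables = 1" "$conf"
append_once "net.bridge.bridge-nf-call-ip6tables = 1" "$conf"
append_once "net.bridge.bridge-nf-call-iptables = 1" "$conf"   # repeated call changes nothing
cat "$conf"
```

The same helper would work for the `br-netfilter` entry in `/etc/modules-load.d/modules.conf`.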

# Configure containerd

mkdir -p /etc/containerd/

mv containerd-config.toml /etc/containerd/config.toml

mv containerd.service /etc/systemd/system/

# Configure the Kubelet

mv kubelet-config.yaml /var/lib/kubelet/

mv kubelet.service /etc/systemd/system/

# Configure the Kubernetes Proxy

mv kube-proxy-config.yaml /var/lib/kube-proxy/

mv kube-proxy.service /etc/systemd/system/

# Start the Worker Services

systemctl daemon-reload

systemctl enable containerd kubelet kube-proxy

systemctl start containerd kubelet kube-proxy
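Before moving on to the verification from the jumpbox, it can help to check on the node itself that all three services actually came up. The following is a sketch with a hypothetical helper name (`check_units`); it only uses `systemctl is-active --quiet`, which exits with 0 when a unit is active.

```shell
#!/usr/bin/env bash
# Hypothetical helper: report whether each given systemd unit is active.
# Returns non-zero if at least one unit is not active.
check_units() {
  local unit rc=0
  for unit in "$@"; do
    if systemctl is-active --quiet "$unit"; then
      echo "$unit: active"
    else
      echo "$unit: NOT active (inspect with: journalctl -u $unit)"
      rc=1
    fi
  done
  return "$rc"
}

# Usage on a worker node:
#   check_units containerd kubelet kube-proxy
```

When a node does not reach `Ready`, the kubelet unit's journal is usually the first place to look.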

## Run on the jumpbox

# Verification

ssh server "kubectl get nodes -owide --kubeconfig admin.kubeconfig"

NAME STATUS ROLES AGE VERSION

node-0 Ready <none> 23s v1.32.3

--- Repeat the same setup on node-1 ---

# Verification

ssh server "kubectl get nodes -owide --kubeconfig admin.kubeconfig"

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

node-0 Ready <none> 2m31s v1.32.3 192.168.105.153 <none> Ubuntu 22.04.5 LTS 5.15.0-164-generic containerd://2.1.0-beta.0

node-1 Ready <none> 8s v1.32.3 192.168.105.154 <none> Ubuntu 22.04.5 LTS 5.15.0-164-generic containerd://2.1.0-beta.0

10. Configuring kubectl for remote access (set up kubectl on the jumpbox so it can reach the API server with admin privileges)

# Configuring kubectl for Remote Access

# You should be able to ping server.kubernetes.local based on the /etc/hosts DNS entry from a previous lab.

curl -s --cacert ca.crt https://server.kubernetes.local:6443/version | jq

# Generate a kubeconfig file suitable for authenticating as the admin user

# Order in which kubectl looks for a kubeconfig

# 1. --kubeconfig flag 2. $KUBECONFIG 3. $HOME/.kube/config (/root/.kube/config for root)

kubectl config set-cluster kubernetes-the-hard-way \

--certificate-authority=ca.crt \

--embed-certs=true \

--server=https://server.kubernetes.local:6443

kubectl config set-credentials admin \

--client-certificate=admin.crt \

--client-key=admin.key

kubectl config set-context kubernetes-the-hard-way \

--cluster=kubernetes-the-hard-way \

--user=admin

kubectl config use-context kubernetes-the-hard-way

kubectl config get-contexts

CURRENT NAME CLUSTER AUTHINFO NAMESPACE

* kubernetes-the-hard-way kubernetes-the-hard-way admin
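The `kubectl config` commands above were run without `--kubeconfig`, so with `$KUBECONFIG` unset they write to the default file under the home directory. The lookup order can be sketched as a small function. The name `resolve_kubeconfig` is hypothetical, and this is a simplification: kubectl can also merge a colon-separated list of files in `$KUBECONFIG`, which this sketch does not model.

```shell
#!/usr/bin/env bash
# Hypothetical helper mirroring the lookup order:
#   1. explicit --kubeconfig value  2. $KUBECONFIG  3. $HOME/.kube/config
# Simplification: a colon-separated list in $KUBECONFIG is returned unchanged.
resolve_kubeconfig() {
  local flag_value="${1:-}"
  if [ -n "$flag_value" ]; then
    echo "$flag_value"
  elif [ -n "${KUBECONFIG:-}" ]; then
    echo "$KUBECONFIG"
  else
    echo "$HOME/.kube/config"
  fi
}

resolve_kubeconfig admin.kubeconfig                   # explicit flag wins
KUBECONFIG=/tmp/demo.kubeconfig resolve_kubeconfig    # then the environment variable
( unset KUBECONFIG; resolve_kubeconfig )              # finally the default path
```

This is also why the earlier commands on server needed `--kubeconfig admin.kubeconfig`, while the `kubectl` calls on the jumpbox from this point on do not.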

# Verification

kubectl version

Client Version: v1.32.3

Kustomize Version: v5.5.0

Server Version: v1.32.3

kubectl get nodes -owide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

node-0 Ready <none> 23m v1.32.3 192.168.105.153 <none> Ubuntu 22.04.5 LTS 5.15.0-164-generic containerd://2.1.0-beta.0

node-1 Ready <none> 21m v1.32.3 192.168.105.154 <none> Ubuntu 22.04.5 LTS 5.15.0-164-generic containerd://2.1.0-beta.0

11. Manual routing for Pod CIDR traffic (OS kernel routing table)

## Run on the jumpbox

# Provisioning Pod Network Routes

{

SERVER_IP=$(grep server machines.txt | cut -d " " -f 1)

NODE_0_IP=$(grep node-0 machines.txt | cut -d " " -f 1)

NODE_0_SUBNET=$(grep node-0 machines.txt | cut -d " " -f 4)

NODE_1_IP=$(grep node-1 machines.txt | cut -d " " -f 1)

NODE_1_SUBNET=$(grep node-1 machines.txt | cut -d " " -f 4)

}

echo $SERVER_IP $NODE_0_IP $NODE_0_SUBNET $NODE_1_IP $NODE_1_SUBNET

192.168.105.152 192.168.105.153 10.200.0.0/24 192.168.105.154 10.200.1.0/24

ssh root@server <<EOF

ip route add ${NODE_0_SUBNET} via ${NODE_0_IP}

ip route add ${NODE_1_SUBNET} via ${NODE_1_IP}

EOF

ssh root@node-0 <<EOF

ip route add ${NODE_1_SUBNET} via ${NODE_1_IP}

EOF

ssh root@node-1 <<EOF

ip route add ${NODE_0_SUBNET} via ${NODE_0_IP}

EOF
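The rule behind the three heredocs is that each machine needs one route for every other machine's Pod subnet, with that machine's IP as the next hop. A sketch that derives the commands from `machines.txt` for any number of nodes is shown below. The helper name `routes_for` and the FQDN values are assumptions, and the script only prints the commands instead of running them. Also note that routes added with `ip route add` do not survive a reboot.

```shell
#!/usr/bin/env bash
# Prints the "ip route add" commands a given host needs: one route per *other*
# machine that owns a Pod subnet. Assumed input format: IP FQDN HOSTNAME [POD_SUBNET]
routes_for() {
  local target="$1" file="$2" ip fqdn host subnet
  while read -r ip fqdn host subnet; do
    [ -n "$subnet" ] || continue          # skip machines without a Pod subnet (server)
    [ "$host" != "$target" ] || continue  # a node reaches its own Pod subnet locally
    echo "ip route add $subnet via $ip"
  done < "$file"
}

machines=$(mktemp)
cat > "$machines" <<'EOF'
192.168.105.152 server.kubernetes.local server
192.168.105.153 node-0.kubernetes.local node-0 10.200.0.0/24
192.168.105.154 node-1.kubernetes.local node-1 10.200.1.0/24
EOF

for h in server node-0 node-1; do
  echo "# $h"
  routes_for "$h" "$machines"
done
```

The printed commands match the ones sent over SSH above: two routes for server, one for each worker.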

# Verification

# Before applying the routes

ssh server ip -c route

default via 192.168.105.1 dev ens33 proto static

192.168.105.0/24 dev ens33 proto kernel scope link src 192.168.105.152

ssh node-0 ip -c route

default via 192.168.105.1 dev ens33 proto static

10.200.1.0/24 via 192.168.105.154 dev ens33

192.168.105.0/24 dev ens33 proto kernel scope link src 192.168.105.153

ssh node-1 ip -c route

default via 192.168.105.1 dev ens33 proto static

192.168.105.0/24 dev ens33 proto kernel scope link src 192.168.105.154

# After applying the routes

ssh server ip -c route

default via 192.168.105.1 dev ens33 proto static

10.200.0.0/24 via 192.168.105.153 dev ens33

10.200.1.0/24 via 192.168.105.154 dev ens33

192.168.105.0/24 dev ens33 proto kernel scope link src 192.168.105.152

ssh node-0 ip -c route

default via 192.168.105.1 dev ens33 proto static

10.200.1.0/24 via 192.168.105.154 dev ens33

192.168.105.0/24 dev ens33 proto kernel scope link src 192.168.105.153

ssh node-1 ip -c route

default via 192.168.105.1 dev ens33 proto static

10.200.0.0/24 via 192.168.105.153 dev ens33

192.168.105.0/24 dev ens33 proto kernel scope link src 192.168.105.154

12. Smoke-testing the cluster

# Data Encryption

# Create a generic secret

kubectl create secret generic kubernetes-the-hard-way --from-literal="mykey=mydata"

kubectl get secret kubernetes-the-hard-way

NAME TYPE DATA AGE

kubernetes-the-hard-way Opaque 1 10m

# Print a hexdump of the kubernetes-the-hard-way secret stored in etcd

# etcdctl get …: reads the key directly from etcd, bypassing the Kubernetes API (very powerful access)

# Actual etcd storage path of the Secret resource: /registry/secrets/default/kubernetes-the-hard-way

ssh root@server \

'etcdctl get /registry/secrets/default/kubernetes-the-hard-way | hexdump -C'

00000000 2f 72 65 67 69 73 74 72 79 2f 73 65 63 72 65 74 |/registry/secret|

00000010 73 2f 64 65 66 61 75 6c 74 2f 6b 75 62 65 72 6e |s/default/kubern|

00000020 65 74 65 73 2d 74 68 65 2d 68 61 72 64 2d 77 61 |etes-the-hard-wa|

00000030 79 0a 6b 38 73 3a 65 6e 63 3a 61 65 73 63 62 63 |y.k8s:enc:aescbc|

00000040 3a 76 31 3a 6b 65 79 31 3a e8 d0 28 d7 17 be fd |:v1:key1:..(....|

00000050 28 8c eb 7c 1b 6d e9 94 39 dd f1 17 00 eb 1d 33 |(..|.m..9......3|

00000060 0f a0 fd 0e d4 d3 06 31 e2 59 8e 92 6d bb 7f f0 |.......1.Y..m...|

00000070 d4 d8 b9 79 95 5b ee e3 52 06 8b 0f 24 dd 67 d1 |...y.[..R...$.g.|

00000080 ed 4f ae 2b a8 7d 6d 73 b9 b1 63 5f b7 a9 09 57 |.O.+.}ms..c_...W|

00000090 3b 14 57 f7 f5 32 a2 32 03 e5 08 45 7b 6c 68 98 |;.W..2.2...E{lh.|

000000a0 13 e3 aa c4 63 37 45 1a ef 1c 38 c1 46 7d 2d 43 |....c7E...8.F}-C|

000000b0 b1 43 36 c7 8f 74 0c 19 5d 50 4b 36 00 58 ff d0 |.C6..t..]PK6.X..|

000000c0 67 5a 90 26 9c 49 6f e1 24 b3 53 66 4e 49 3f 2f |gZ.&.Io.$.SfNI?/|

000000d0 8f a7 10 07 34 12 6b 18 74 f7 c8 c8 3b d4 fa bf |....4.k.t...;...|

000000e0 0b a2 18 0d 55 5d e0 e8 8f 03 1d 6f 05 48 52 ed |....U].....o.HR.|

000000f0 3e 14 96 f0 82 10 41 ca bd 35 d6 b0 a8 ec 29 ca |>.....A..5....).|

00000100 91 17 b2 fb 67 d1 b5 c2 e0 94 92 f4 59 b5 5a 9c |....g.......Y.Z.|

00000110 9e 72 cf 31 99 60 a3 81 fb 74 91 8d a9 e8 81 ea |.r.1.`...t......|

00000120 a5 30 e5 15 46 4b 0e 0c 5a f2 33 73 2a dd b5 2a |.0..FK..Z.3s*..*|

00000130 3f 3c 0f b0 9c b0 64 a5 cd 04 7f 19 4d 35 55 c8 |?<....d.....M5U.|

00000140 20 1d 3e 74 79 78 e4 d7 a8 5e 30 a5 b3 55 90 c6 | .>tyx...^0..U..|

00000150 0e b0 69 69 75 fd 68 0d 4d 0a |..iiu.h.M.|

0000015a
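The readable part of the dump ends with the header `k8s:enc:aescbc:v1:key1:`, and everything after it is ciphertext. As a small illustration, the header can be split into its colon-separated fields in plain bash. The variable names are labels chosen for this sketch, not official terminology.

```shell
#!/usr/bin/env bash
# Split the storage header seen in the hexdump into its colon-separated fields.
header="k8s:enc:aescbc:v1:key1:"

# IFS=: applies only to this read; "_" swallows the empty field after the last colon
IFS=: read -r magic enc provider version keyname _ <<< "$header"

echo "prefix   : $magic:$enc"
echo "provider : $provider"
echo "version  : $version"
echo "key name : $keyname"
```

The key name is the part to watch when rotating keys, since it tells which entry in `encryption-config.yaml` was used to write the value.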

# k8s:enc:aescbc:v1:key1 -> the Secret is stored encrypted with AES-CBC, as intended

# k8s:enc : Kubernetes encryption format prefix

# aescbc : encryption algorithm (AES-CBC)

# v1 : encryption provider version

# key1 : name of the encryption key that was used

# Deployments

# Create a deployment for the nginx web server

kubectl create deployment nginx \

--image=nginx:latest

kubectl get pods -l app=nginx

NAME READY STATUS RESTARTS AGE

nginx-54c98b4f84-675g5 1/1 Running 0 21s

ssh node-1 crictl ps

CONTAINER IMAGE CREATED STATE NAME ATTEMPT POD ID POD NAMESPACE

3e0818038f616 2e97da2b9cb35 About a minute ago Running nginx 0 bd15fdb6ac7b9 nginx-54c98b4f84-675g5 default

ssh node-1 brctl show

bridge name bridge id STP enabled interfaces

cni0 8000.0a873741a215 no veth529e7a67

ssh node-1 ip addr

3: cni0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000

link/ether 0a:87:37:41:a2:15 brd ff:ff:ff:ff:ff:ff

inet 10.200.1.1/24 brd 10.200.1.255 scope global cni0

valid_lft forever preferred_lft forever

inet6 fe80::887:37ff:fe41:a215/64 scope link

valid_lft forever preferred_lft forever

4: veth529e7a67@if2: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue master cni0 state UP group default qlen 1000

link/ether 1a:46:4b:d4:3b:fe brd ff:ff:ff:ff:ff:ff link-netns cni-eeb5dfca-5453-e0ed-e5e7-492ecda4830e

inet6 fe80::1846:4bff:fed4:3bfe/64 scope link

valid_lft forever preferred_lft forever

# Port Forwarding

# Retrieve the full name of the nginx pod

POD_NAME=$(kubectl get pods -l app=nginx \

-o jsonpath="{.items[0].metadata.name}")

# Forward port 8080 on your local machine to port 80 of the nginx pod

kubectl port-forward $POD_NAME 8080:80

Forwarding from 127.0.0.1:8080 -> 80

Forwarding from [::1]:8080 -> 80

# In a new terminal make an HTTP request using the forwarding address

curl --head http://127.0.0.1:8080

HTTP/1.1 200 OK

Server: nginx/1.29.4

Date: Sat, 10 Jan 2026 14:12:21 GMT

Content-Type: text/html

Content-Length: 615

Last-Modified: Tue, 09 Dec 2025 18:28:10 GMT

Connection: keep-alive

ETag: "69386a3a-267"

Accept-Ranges: bytes

# Expose the nginx deployment using a NodePort service

kubectl expose deployment nginx --port 80 --type NodePort

kubectl get service,ep nginx

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/nginx NodePort 10.32.0.165 <none> 80:30461/TCP 26s

NAME ENDPOINTS AGE

endpoints/nginx 10.200.1.2:80 26s

NODE_PORT=$(kubectl get svc nginx --output=jsonpath='{range .spec.ports[0]}{.nodePort}')

NODE_NAME=$(kubectl get pods \

-l app=nginx \

-o jsonpath="{.items[0].spec.nodeName}")

# Make an HTTP request using the IP address and the nginx node port

curl -I http://${NODE_NAME}:${NODE_PORT}

HTTP/1.1 200 OK

Server: nginx/1.29.4

Date: Sat, 10 Jan 2026 14:13:50 GMT

Content-Type: text/html

Content-Length: 615

Last-Modified: Tue, 09 Dec 2025 18:28:10 GMT

Connection: keep-alive

ETag: "69386a3a-267"

Accept-Ranges: bytes

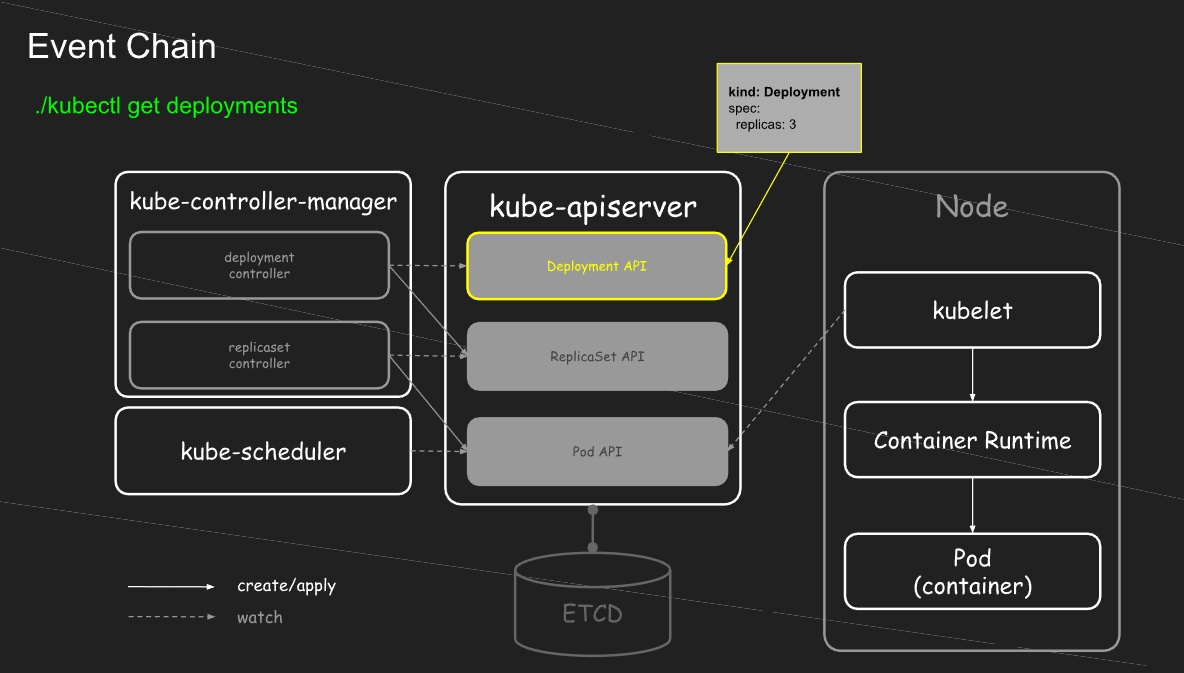

Why each Kubernetes component is needed

(Reference: https://netpple.github.io/docs/deepdive-into-kubernetes/k8s-hardway)

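| Requirement | Action | Notes |

| Basic container execution: image -> bundle -> run | Install containerd | containerd -> runc. containerd calls runc, the OCI runtime, to run the bundle created from the image as an actual container process. |

| Each node needs something that manages container and Pod state | Start kubelet | kubelet -> containerd -> runc. Once kubelet is running, placing a Pod YAML file in the manifests path makes kubelet create the Pod. Deletion works the same way: remove the YAML file from the manifests path and kubelet detects it and deletes the Pod. |

| As the number of nodes grows, the status reported by each kubelet should be stored and queried consistently in one place | Start kube-apiserver and etcd | When kubelet reports Pod status, the API server receives it and stores it in etcd. This is state collection and storage rather than management of kubelet. |

| Pod status is Failure: calling the API server from inside a Pod requires permissions | Create a service account | If kube-controller-manager is running, it creates the service account automatically. |

| Sending every request to the API server with curl is tedious | Install kubectl | |

| The node for a Pod should be chosen according to the situation instead of being specified by hand | Start kube-scheduler | |

| After a deployment, the state should follow automatically when the desired spec changes | Start kube-controller-manager | With only the scheduler running, tolerations had to be added to Pods by hand because of node taints. Once kube-controller-manager is running, its internal controllers watch node status and remove the taints automatically. |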
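The kubelet-only stage in the table (a Pod is created when a YAML file appears in the manifests path and deleted when the file is removed) can be tried with a static Pod manifest. Here is a minimal sketch: the helper name `write_static_pod` and the Pod name `static-nginx` are hypothetical, and the directory kubelet actually watches depends on its `staticPodPath` setting (`/etc/kubernetes/manifests` is only a commonly used value, not something this lab configures). The demo writes to a temporary directory so it can run anywhere.

```shell
#!/usr/bin/env bash
# Hypothetical helper: drop a static Pod manifest into the directory kubelet watches.
# On a real node, pass the directory configured as kubelet's staticPodPath.
write_static_pod() {
  local manifests_dir="$1"
  mkdir -p "$manifests_dir"
  cat > "$manifests_dir/static-nginx.yaml" <<'EOF'
apiVersion: v1
kind: Pod
metadata:
  name: static-nginx
spec:
  containers:
  - name: nginx
    image: nginx:latest
EOF
}

# Demo in a temporary directory (no kubelet is involved here)
demo_dir=$(mktemp -d)
write_static_pod "$demo_dir"        # on a node: kubelet would create the Pod
ls "$demo_dir"
rm "$demo_dir/static-nginx.yaml"    # on a node: kubelet would delete the Pod
```

No API server is involved in this flow, which is the point of that row: kubelet alone is enough to run Pods on a single node.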

Sources

- kubernetes-the-hard-way/docs at master · kelseyhightower/kubernetes-the-hard-way · GitHub (github.com)

- Public Key and Private Key, Naver Blog (blog.naver.com)

- Part 1. Kubernetes hard?way (netpple.github.io)

- [Kubernetes] Cluster: Building a Cluster by Hand - 0. Overview, Eraser’s StudyLog (sirzzang.github.io)
